Merge branch 'main' into feat/rig-rust

297

README.md

|

|

@@ -8,7 +8,7 @@

|

|||

|

||||

Integrate the DeepSeek API into popular software. Access [DeepSeek Open Platform](https://platform.deepseek.com/) to get an API key.

|

||||

|

||||

English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/README_cn.md)

|

||||

English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/README_cn.md)/[日本語](https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/README_ja.md)

|

||||

|

||||

</div>

|

||||

|

||||

|

|

@@ -18,6 +18,11 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

### Applications

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://avatars.githubusercontent.com/u/171659527?s=400&u=39906ab3b6e2066f83046096a66a77fb3f8bb836&v=4" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/quantalogic/quantalogic">Quantalogic</a> </td>

|

||||

<td> QuantaLogic is a ReAct (Reasoning & Action) framework for building advanced AI agents. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/deepseek-ai/awesome-deepseek-integration/assets/13600976/224d547a-6fbc-47c8-859f-aa14813e2b0f" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/chatbox/README.md">Chatbox</a> </td>

|

||||

|

|

@@ -42,6 +47,16 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

<td> <img src="https://www.librechat.ai/librechat.svg" alt="LibreChat" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.librechat.ai/docs/configuration/librechat_yaml/ai_endpoints/deepseek">LibreChat</a> </td>

|

||||

<td> LibreChat is a customizable open-source app that seamlessly integrates DeepSeek for enhanced AI interactions. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/longevity-genie/chat-ui/11c6647c83f9d2de21180b552474ac5ffcf53980/static/geneticsgenie/icon-128x128.png" alt="Icon" width="64" height="auto"/> </td>

|

||||

<td> <a href="https://github.com/longevity-genie/just-chat">Just-Chat</a> </td>

|

||||

<td> Build your own LLM agent and chat with it, simply and quickly!</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.papersgpt.com/images/logo/favicon.ico" alt="PapersGPT" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/papersgpt/papersgpt-for-zotero">PapersGPT</a> </td>

|

||||

<td> PapersGPT is a Zotero plugin that integrates seamlessly with DeepSeek and multiple other AI models for quickly reading papers in Zotero. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/rss-translator/RSS-Translator/main/core/static/favicon.ico" alt="Icon" width="64" height="auto" /> </td>

|

||||

|

|

@@ -83,12 +98,31 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

<td> <a href="docs/raycast/README.md">Raycast</a></td>

|

||||

<td> <a href="https://raycast.com/?via=ViGeng">Raycast</a> is a productivity tool for macOS that lets you control your tools with a few keystrokes. It supports various extensions including DeepSeek AI.</td>

|

||||

</tr>

|

||||

<tr> <td> <img src="https://niceprompt.app/favicon.ico" alt="Icon" width="64" height="auto" /> </td> <td> <a href="https://niceprompt.app">Nice Prompt</a></td> <td> <a href="https://niceprompt.app">Nice Prompt</a> lets you organize, share and use your prompts in your code editor, with Cursor and VS Code.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://avatars.githubusercontent.com/u/193405629?s=200&v=4" alt="PHP Client" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-php/deepseek-php-client/blob/master/README.md">PHP Client</a> </td>

|

||||

<td> Deepseek PHP Client is a robust and community-driven PHP client library for seamless integration with the Deepseek API. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<tr>

|

||||

<td>

|

||||

<img

|

||||

src="https://github.com/tornikegomareli/DeepSwiftSeek/blob/main/logo.webp"

|

||||

alt="DeepSwiftSeek Logo"

|

||||

width="64"

|

||||

height="auto"

|

||||

/>

|

||||

</td>

|

||||

<td>

|

||||

<a href="https://github.com/tornikegomareli/DeepSwiftSeek/blob/main/README.md">DeepSwiftSeek</a>

|

||||

</td>

|

||||

<td>

|

||||

DeepSwiftSeek is a lightweight yet powerful Swift client library that integrates with the DeepSeek API.

|

||||

It provides easy-to-use Swift concurrency for chat, streaming, FIM (Fill-in-the-Middle) completions, and more.

|

||||

</td>

|

||||

</tr>

|

||||

<td> <img src="https://avatars.githubusercontent.com/u/958072?s=200&v=4" alt="Laravel Integration" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-php/deepseek-laravel/blob/master/README.md">Laravel Integration</a> </td>

|

||||

<td> Laravel wrapper for the Deepseek PHP client, for seamless DeepSeek API integration with Laravel applications.</td>

|

||||

|

|

@@ -99,8 +133,8 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

<td> <a href="https://www.zotero.org">Zotero</a> is a free, easy-to-use tool to help you collect, organize, annotate, cite, and share research.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="./docs/Siyuan/assets/image-20250122162731-7wkftbw.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/Siyuan/README.md">SiYuan</a> </td>

|

||||

<td> <img src="https://b3log.org/images/brand/siyuan-128.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/SiYuan/README.md">SiYuan</a> </td>

|

||||

<td> SiYuan is a privacy-first personal knowledge management system that supports complete offline usage, as well as end-to-end encrypted data sync.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

|

|

@@ -138,11 +172,64 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

<td> <a href="https://agenticflow.ai/">AgenticFlow</a> </td>

|

||||

<td> <a href="https://agenticflow.ai/">AgenticFlow</a> is a no-code platform where marketers build agentic AI workflows for go-to-market automation, powered by hundreds of everyday apps as tools for your AI agents.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/ZGGSONG/STranslate/raw/main/img/favicon.svg" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://stranslate.zggsong.com/en/">STranslate</a></td>

|

||||

<td> <a href="https://stranslate.zggsong.com/en/">STranslate</a> (Windows) is a ready-to-use translation and OCR tool built with WPF. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/user-attachments/assets/5e16beb0-993e-47bf-807e-7c8804b313a2" alt="Asp Client" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/Anwar-alhitar/Deepseek.Asp.Client/blob/master/README.md">ASP Client</a> </td>

|

||||

<td><a href="https://github.com/Anwar-alhitar/Deepseek.Asp.Client/blob/master/README.md">Deepseek.ASPClient</a> is a lightweight ASP.NET wrapper for the Deepseek AI API, designed to simplify AI-driven text processing in .NET applications. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.gptaiflow.tech/logo.png" alt="gpt-ai-flow-logo" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.gptaiflow.tech/docs/product/api-keys-setup#setup-deepseek-api-keys">GPT AI Flow</a></td>

|

||||

<td>

|

||||

The ultimate productivity weapon built by engineers for efficiency enthusiasts (themselves): <a href="https://www.gptaiflow.tech/">GPT AI Flow</a>

|

||||

<ul>

|

||||

<li>`Shift+Alt+Space` Wake up desktop intelligent hub</li>

|

||||

<li>Local encrypted storage</li>

|

||||

<li>Custom instruction engine</li>

|

||||

<li>On-demand calling without subscription bundling</li>

|

||||

</ul>

|

||||

</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/user-attachments/assets/b09f17a8-936d-4dac-8b24-1682d52c9a3c" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/alecm20/story-flicks">Story-Flicks</a></td>

|

||||

<td>Generate high-definition short story videos from a single sentence, with support for models such as DeepSeek.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://prompt.16x.engineer/favicon.ico" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/16x_prompt/README.md">16x Prompt</a> </td>

|

||||

<td> <a href="https://prompt.16x.engineer/">16x Prompt</a> is an AI coding tool with context management. It helps developers manage source code context and craft prompts for complex coding tasks on existing codebases.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.petercat.ai/images/favicon.ico" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.petercat.ai">PeterCat</a> </td>

|

||||

<td> A conversational Q&A agent configuration system, self-hosted deployment solutions, and a convenient all-in-one application SDK, allowing you to create intelligent Q&A bots for your GitHub repositories.</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### AI Agent frameworks

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/smolagents/mascot_smol.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/huggingface/smolagents/tree/main"> smolagents </a> </td>

|

||||

<td> The simplest way to build great agents. Agents write Python code to call tools and orchestrate other agents. Priority support for open models like DeepSeek-R1! </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td><img src="https://yomo.run/yomo-logo.png" alt="Icon" width="64" height="auto" /></td>

|

||||

<td><a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/yomo/README.md">YoMo</a></td>

|

||||

<td>Stateful Serverless LLM Function Calling Framework with Strongly-typed Language Support</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/superagentxai/superagentX/refs/heads/master/docs/logo/icononly_transparent_nobuffer.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

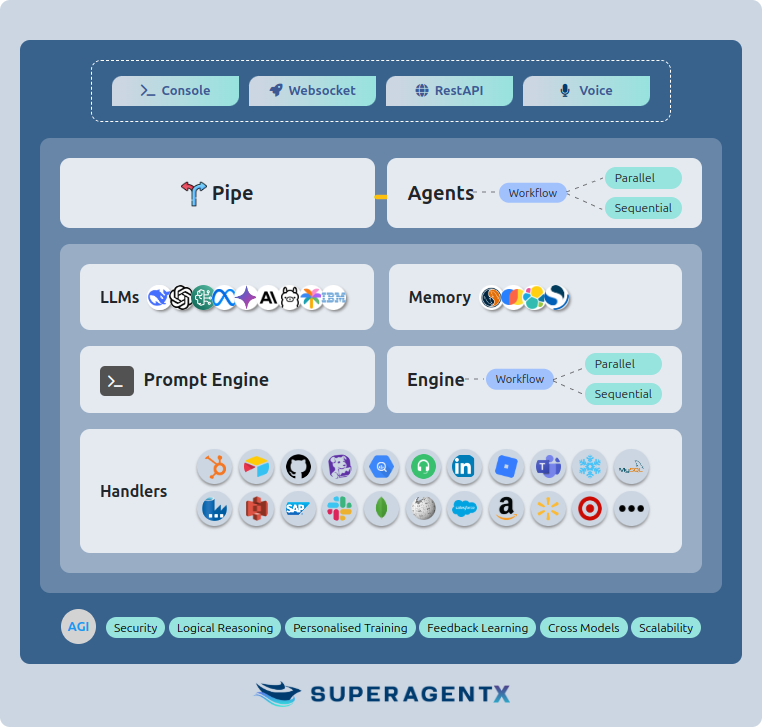

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/superagentx/README.md">SuperAgentX</a> </td>

|

||||

<td>SuperAgentX: A Lightweight Open Source AI Framework Built for Autonomous Multi-Agent Applications with Artificial General Intelligence (AGI) Capabilities.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://panda.fans/_assets/favicons/apple-touch-icon.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/anda/README.md">Anda</a> </td>

|

||||

|

|

@@ -152,6 +239,26 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

<td> <img src="https://rig.rs/assets/favicon.png" alt="Rig (Rust)" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://rig.rs/">RIG</a> </td>

|

||||

<td>Build modular and scalable LLM Applications in Rust.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/longevity-genie/chat-ui/11c6647c83f9d2de21180b552474ac5ffcf53980/static/geneticsgenie/icon-128x128.png" alt="Icon" width="64" height="auto"/> </td>

|

||||

<td> <a href="https://github.com/longevity-genie/just-agents">Just-Agents</a> </td>

|

||||

<td>A lightweight, straightforward library for LLM agents - no over-engineering, just simplicity!</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://alice.fun/alice-logo.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/bob-robert-ai/bob/blob/main/alice/readme.md">Alice</a> </td>

|

||||

<td>An autonomous AI agent on ICP, leveraging LLMs like DeepSeek for on-chain decision-making. Alice combines real-time data analysis with a playful personality to manage tokens, mine BOB, and govern ecosystems.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/Upsonic/Upsonic/blob/9d2e6d43b44defc6744817330625661ca3a2184e/Upsonic%20pp.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/Upsonic/Upsonic">Upsonic</a> </td>

|

||||

<td>Upsonic offers a cutting-edge enterprise-ready agent framework where you can orchestrate LLM calls, agents, and computer use to complete tasks cost-effectively.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://avatars.githubusercontent.com/u/173022229" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/APRO-com">ATTPs</a> </td>

|

||||

<td>A foundational protocol framework for trusted communication between agents. By integrating with the <a href="https://docs.apro.com/attps">ATTPs</a> SDK, any DeepSeek-based agent can access features such as agent registration, sending verifiable data, and retrieving verifiable data, enabling trusted communication with agents from other platforms. </td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

|

|

@@ -163,6 +270,42 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/ragflow/README.md"> RAGFlow </a> </td>

|

||||

<td> An open-source RAG (Retrieval-Augmented Generation) engine based on deep document understanding. It offers a streamlined RAG workflow for businesses of any scale, combining LLM (Large Language Models) to provide truthful question-answering capabilities, backed by well-founded citations from various complex formatted data. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/pingcap/tidb.ai/main/frontend/app/public/nextra/icon-dark.svg" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/autoflow/README.md"> Autoflow </a> </td>

|

||||

<td> <a href="https://github.com/pingcap/autoflow">AutoFlow</a> is an open-source knowledge base tool based on GraphRAG (Graph-based Retrieval-Augmented Generation), built on <a href="https://www.pingcap.com/ai?utm_source=tidb.ai&utm_medium=community">TiDB</a> Vector, LlamaIndex, and DSPy. It provides a Perplexity-like search interface and allows easy integration of AutoFlow's conversational search window into your website by embedding a simple JavaScript snippet. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://assets.zilliz.com/Zilliz_Logo_Mark_White_20230223_041013_86057436cc.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/zilliztech/deep-searcher"> DeepSearcher </a> </td>

|

||||

<td> DeepSearcher combines powerful LLMs (DeepSeek, OpenAI, etc.) and Vector Databases (Milvus, etc.) to perform search, evaluation, and reasoning based on private data, providing highly accurate answers and comprehensive reports. </td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### Solana frameworks

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="./docs/solana-agent-kit/assets/sendai-logo.png" alt="Icon" width="128" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/solana-agent-kit/README.md"> Solana Agent Kit </a> </td>

|

||||

<td>An open-source toolkit for connecting AI agents to Solana protocols. Now, any agent, using any Deepseek LLM, can autonomously perform 60+ Solana actions. </td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### Synthetic data curation

|

||||

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/bespokelabsai/curator/main/docs/Bespoke-Labs-Logomark-Red-crop.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/curator/README.md"> Curator </a> </td>

|

||||

<td> An open-source tool to curate large scale datasets for post-training LLMs. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/user-attachments/assets/8455694b-c52e-40ec-847e-adf6a5ac064f" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/Kiln-AI/Kiln"> Kiln </a> </td>

|

||||

<td>Generate synthetic datasets and distill R1 models into custom fine-tunes. </td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### IM Application Plugins

|

||||

|

|

@@ -174,9 +317,14 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

<td>Domain knowledge assistant in personal WeChat and Feishu, focusing on answering questions.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/RockChinQ/QChatGPT/blob/master/res/logo.png?raw=true" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/RockChinQ/QChatGPT">QChatGPT<br/>(QQ)</a> </td>

|

||||

<td> A QQ chatbot with high stability, plugin support, and real-time networking. </td>

|

||||

<td> <img src="https://github.com/RockChinQ/LangBot/blob/master/res/logo.png?raw=true" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/RockChinQ/LangBot">LangBot<br/>(QQ, Lark, WeCom)</a> </td>

|

||||

<td> An LLM-based IM bot framework that supports QQ, Lark, WeCom, and more platforms.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://nonebot.dev/logo.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/KomoriDev/nonebot-plugin-deepseek">NoneBot<br/>(QQ, Lark, Discord, TG, etc.)</a> </td>

|

||||

<td> Built on the NoneBot framework, it provides intelligent chat and deep-thinking functions and supports QQ, Lark, Discord, TG, and more platforms.</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

|

|

@@ -213,13 +361,33 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

<td> <a href="https://fluent.thinkstu.com/"> FluentRead </a> </td>

|

||||

<td> A revolutionary open-source browser translation plugin that enables everyone to have a native-like reading experience </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.ncurator.com/_next/image?url=%2Ffavicon.ico&w=96&q=75" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.ncurator.com/"> Ncurator </a> </td>

|

||||

<td> Knowledge Base AI Q&A Assistant - Let AI help you organize and analyze knowledge</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/oinzen/RSSFlow-doc/blob/main/docs/images/en/icon64.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://rssflow.oinchain.com"> RssFlow </a> </td>

|

||||

<td>An intelligent RSS reader browser extension with AI-powered RSS summarization and multi-dimensional feed views. Supports DeepSeek model configuration for enhanced content understanding. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.typral.com/_next/image?url=%2Ffavicon.ico&w=96&q=75" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.typral.com/"> Typral </a> </td>

|

||||

<td> Fast AI writing assistant - Let AI help you quickly improve articles, papers, text...</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://static.trancy.org/assets/trancy_logo.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.trancy.org/"> Trancy </a> </td>

|

||||

<td>Immersive bilingual translation, video bilingual subtitles, sentence/word selection translation extension</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### VS Code Extensions

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/deepseek-ai/awesome-deepseek-integration/assets/59196087/e4d082de-6f64-44b9-beaa-0de55d70cfab" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <img src="https://github.com/continuedev/continue/blob/main/docs/static/img/logo.png?raw=true" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/continue/README.md"> Continue </a> </td>

|

||||

<td> Continue is an open-source autopilot in IDE. </td>

|

||||

</tr>

|

||||

|

|

@@ -228,26 +396,56 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/cline/README.md"> Cline </a> </td>

|

||||

<td> Meet Cline, an AI assistant that can use your CLI aNd Editor. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/Sitoi/ai-commit/refs/heads/main/images/logo.png?raw=true" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/Sitoi/ai-commit/blob/main/README.md"> AI Commit </a> </td>

|

||||

<td> Use AI to generate git commit messages in VS Code. </td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### Visual Studio Extensions

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://merryyellow.gallerycdn.vsassets.io/extensions/merryyellow/comment2gpt/2.0.5/1739475434185/Microsoft.VisualStudio.Services.Icons.Default" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://marketplace.visualstudio.com/items?itemName=MerryYellow.Comment2GPT"> Comment2GPT </a> </td>

|

||||

<td> Use OpenAI ChatGPT, Google Gemini, Anthropic Claude, DeepSeek and Ollama through your comments </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://merryyellow.gallerycdn.vsassets.io/extensions/merryyellow/codelens2gpt/2.0.5/1739475875714/Microsoft.VisualStudio.Services.Icons.Default" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://marketplace.visualstudio.com/items?itemName=MerryYellow.CodeLens2GPT"> CodeLens2GPT </a> </td>

|

||||

<td> Use OpenAI ChatGPT, Google Gemini, Anthropic Claude, DeepSeek and Ollama through the CodeLens </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://merryyellow.gallerycdn.vsassets.io/extensions/merryyellow/uca-lite/1.4.2/1739392928984/Microsoft.VisualStudio.Services.Icons.Default" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://marketplace.visualstudio.com/items?itemName=MerryYellow.UCA-Lite"> Unity Code Assist Lite </a> </td>

|

||||

<td> Code assistance for Unity scripts </td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### neovim Extensions

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/user-attachments/assets/d66dfc62-8e69-4b00-8549-d0158e48e2e0" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <img src="https://github.com/user-attachments/assets/c316f70a-0a3c-4a32-b148-4df15e609acc" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/avante.nvim/README.md"> avante.nvim </a> </td>

|

||||

<td> avante.nvim is an open-source autopilot in IDE. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/user-attachments/assets/d66dfc62-8e69-4b00-8549-d0158e48e2e0" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/llm.nvim/README.md"> llm.nvim </a> </td>

|

||||

<td> A free large language model(LLM) plugin that allows you to interact with LLM in Neovim. Supports any LLM, such as Deepseek, GPT, GLM, Kimi or local LLMs (such as ollama). </td>

|

||||

<td> A free large language model (LLM) plugin that allows you to interact with LLM in Neovim. Supports any LLM, such as Deepseek, GPT, GLM, Kimi or local LLMs (such as ollama). </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/user-attachments/assets/d66dfc62-8e69-4b00-8549-d0158e48e2e0" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/codecompanion.nvim/README.md"> codecompanion.nvim </a> </td>

|

||||

<td> AI-powered coding, seamlessly in Neovim. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/user-attachments/assets/d66dfc62-8e69-4b00-8549-d0158e48e2e0" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/minuet-ai.nvim/README.md"> minuet-ai.nvim </a> </td>

|

||||

<td> Minuet offers code completion as-you-type from popular LLMs including Deepseek, OpenAI, Gemini, Claude, Ollama, Codestral, and more. </td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### JetBrains Extensions

|

||||

|

|

@@ -264,7 +462,7 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

<td>Onegai Copilot is an AI coding assistant for JetBrains IDEs. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/deepseek-ai/awesome-deepseek-integration/assets/59196087/e4d082de-6f64-44b9-beaa-0de55d70cfab" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <img src="https://github.com/continuedev/continue/blob/main/docs/static/img/logo.png?raw=true" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/continue/README.md"> Continue </a> </td>

|

||||

<td> Continue is an open-source autopilot in IDE. </td>

|

||||

</tr>

|

||||

|

|

@@ -280,13 +478,28 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

</tr>

|

||||

</table>

|

||||

|

||||

### Cursor

|

||||

### Discord Bots

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://geneplore.com/img/geneplore_color_logo_circular.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/Geneplore AI/README.md"> Geneplore AI </a> </td>

|

||||

<td> Geneplore AI runs one of the largest AI Discord bots, now with Deepseek v3 and R1. </td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### Native AI Code Editor

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://global.discourse-cdn.com/flex020/uploads/cursor1/original/2X/a/a4f78589d63edd61a2843306f8e11bad9590f0ca.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.cursor.com/"> Cursor </a> </td>

|

||||

<td>The AI Code Editor</td>

|

||||

<td>The AI Code Editor based on VS Code</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://exafunction.github.io/public/images/windsurf/windsurf-app-icon.svg" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://codeium.com/windsurf"> WindSurf </a> </td>

|

||||

<td>Another AI Code Editor based on VS Code by Codeium</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

|

|

@@ -305,9 +518,34 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

</tr>

|

||||

</table>

|

||||

|

||||

### Security

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/lukehinds/awesome-deepseek-integration/blob/codegate/docs/codegate/assets/codegate.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/stacklok/codegate/"> CodeGate </a> </td>

|

||||

<td> CodeGate: secure AI code generation</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### Others

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td style="font-size: 64px">🐠</td>

|

||||

<td> <a href="https://github.com/lunary-ai/abso/blob/main/README.md"> Abso </a></td>

|

||||

<td>TypeScript SDK to interact with any LLM provider using the OpenAI format.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://i.imgur.com/IsQYInJ.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/djcopley/ShellOracle/"> ShellOracle </a> </td>

|

||||

<td> A terminal utility for intelligent shell command generation. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://avatars.githubusercontent.com/u/178783630?s=200&v=4" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/bolna-ai/bolna/"> Bolna </a> </td>

|

||||

<td> Use DeepSeek as the LLM for conversational voice AI agents</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/deepseek-ai/awesome-deepseek-integration/assets/59196087/c1e47b01-1766-4f7e-bfe6-ab3cb3991c30" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/tree/main/docs/siri_deepseek_shortcut"> siri_deepseek_shortcut </a> </td>

|

||||

|

|

@@ -318,6 +556,11 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

<td> <a href="https://github.com/rubickecho/n8n-deepseek"> n8n-nodes-deepseek </a> </td>

|

||||

<td> An N8N community node that supports direct integration with the DeepSeek API into workflows. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://framerusercontent.com/images/TSKshn2UFdTyvUi85EDMIXrXgs.png?scale-down-to=512" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/Portkey-AI/gateway"> Portkey AI </a> </td>

|

||||

<td> Portkey is a unified API for interacting with over 1600 LLMs, offering advanced tools for control, visibility, and security in your DeepSeek apps. Python and Node SDKs are available. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://framerusercontent.com/images/8rF2JOaZ8l9AvM4H6ezliw44aI.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/BerriAI/litellm"> LiteLLM </a> </td>

|

||||

|

|

@@ -328,14 +571,34 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

|||

<td> <a href="https://github.com/mem0ai/mem0"> Mem0 </a> </td>

|

||||

<td> Mem0 enhances AI assistants with an intelligent memory layer, enabling personalized interactions and continuous learning over time. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://geneplore.com/img/geneplore_color_logo_circular.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://geneplore.com/bot"> Geneplore AI </a> </td>

|

||||

<td> Geneplore AI runs one of the largest AI Discord bots, now with Deepseek v3 and R1. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.promptfoo.dev/img/logo-panda.svg" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/promptfoo/README.md"> promptfoo </a> </td>

|

||||

<td> Test and evaluate LLM prompts, including DeepSeek models. Compare different LLM providers, catch regressions, and evaluate responses. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> </td>

|

||||

<td> <a href="https://github.com/AndersonBY/deepseek-tokenizer"> deepseek-tokenizer </a> </td>

|

||||

<td> An efficient and lightweight tokenization library for DeepSeek models, relying solely on the `tokenizers` library without heavy dependencies like `transformers`. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://langfuse.com/icon.svg" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://langfuse.com/docs/integrations/deepseek"> Langfuse </a> </td>

|

||||

<td> Open-source LLM observability platform that helps teams collaboratively debug, analyze, and iterate on their DeepSeek applications. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> CR </td>

|

||||

<td> <a href="https://github.com/hustcer/deepseek-review"> deepseek-review </a> </td>

|

||||

<td> 🚀 Sharpen Your Code, Ship with Confidence – Elevate Your Workflow with Deepseek Code Review 🚀 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="http://gptlocalhost.com/wp-content/uploads/2025/01/icon_1024.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://youtu.be/T1my2gqi-7Q"> GPTLocalhost </a> </td>

|

||||

<td> Use DeepSeek-R1 in Microsoft Word locally, with no inference costs. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/suqicloud/wp-ai-chat/raw/main/ic_logo.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/suqicloud/wp-ai-chat"> WordPress ai助手 </a> </td>

|

||||

<td> A WordPress plugin that connects to the DeepSeek API to provide an AI conversation assistant, post generation, and post summaries. </td>

|

||||

</tr>

|

||||

</table>

|

||||

|

|

|

|||

166

README_cn.md

|

|

@ -8,7 +8,7 @@

|

|||

|

||||

将 DeepSeek 大模型能力轻松接入各类软件。访问 [DeepSeek 开放平台](https://platform.deepseek.com/)来获取您的 API key。

|

||||

|

||||

[English](https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/README.md)/简体中文

|

||||

[English](https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/README.md)/简体中文/[日本語](https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/README_ja.md)

|

||||

|

||||

</div>

|

||||

|

||||

|

|

@ -18,6 +18,11 @@

|

|||

### 应用程序

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://avatars.githubusercontent.com/u/171659527?s=400&u=39906ab3b6e2066f83046096a66a77fb3f8bb836&v=4" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/quantalogic/quantalogic">Quantalogic</a> </td>

|

||||

<td> QuantaLogic 是一个 ReAct(推理和行动)框架,用于构建高级 AI 代理。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/deepseek-ai/awesome-deepseek-integration/assets/13600976/224d547a-6fbc-47c8-859f-aa14813e2b0f" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/chatbox/README_cn.md">Chatbox</a> </td>

|

||||

|

|

@ -43,9 +48,14 @@

|

|||

<td> <a href="https://www.librechat.ai/docs/configuration/librechat_yaml/ai_endpoints/deepseek">LibreChat</a> </td>

|

||||

<td> LibreChat 是一个可定制的开源应用程序,无缝集成了 DeepSeek,以增强人工智能交互体验 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.papersgpt.com/images/logo/favicon.ico" alt="PapersGPT" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/papersgpt/papersgpt-for-zotero">PapersGPT</a> </td>

|

||||

<td> PapersGPT是一款集成了DeepSeek及其他多种AI模型的辅助论文阅读的Zotero插件. </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/rss-translator/RSS-Translator/main/core/static/favicon.ico" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="hhttps://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/rss_translator/README_cn.md"> RSS翻译器 </a> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/rss_translator/README_cn.md"> RSS翻译器 </a> </td>

|

||||

<td> 开源、简洁、可自部署的RSS翻译器 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

|

|

@ -77,14 +87,15 @@

|

|||

<td> <a href="docs/raycast/README_cn.md">Raycast</a></td>

|

||||

<td> <a href="https://raycast.com/?via=ViGeng">Raycast</a> 是一款 macOS 生产力工具,它允许你用几个按键来控制你的工具。它支持各种扩展,包括 DeepSeek AI。</td>

|

||||

</tr>

|

||||

</tr> <tr> <td> <img src="https://niceprompt.app/favicon.ico" alt="Icon" width="64" height="auto" /> </td> <td> <a href="https://niceprompt.app">Nice Prompt</a></td> <td> <a href="https://niceprompt.app">Nice Prompt</a> 是一个结合提示工程与社交功能的平台,支持用户高效创建、分享和协作开发AI提示词。</td> </tr>

|

||||

<tr>

|

||||

<td> <img src="./docs/zotero/assets/zotero-icon.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/zotero/README_cn.md">Zotero</a></td>

|

||||

<td> <a href="https://www.zotero.org">Zotero</a> 是一款免费且易于使用的文献管理工具,旨在帮助您收集、整理、注释、引用和分享研究成果。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="./docs/Siyuan/assets/image-20250122162731-7wkftbw.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/Siyuan/README_cn.md">思源笔记</a> </td>

|

||||

<td> <img src="https://b3log.org/images/brand/siyuan-128.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/SiYuan/README_cn.md">思源笔记</a> </td>

|

||||

<td> 思源笔记是一款隐私优先的个人知识管理系统,支持完全离线使用,并提供端到端加密的数据同步功能。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

|

|

@ -112,6 +123,34 @@

|

|||

<td> <a href="https://bobtranslate.com/">Bob</a></td>

|

||||

<td> <a href="https://bobtranslate.com/">Bob</a> 是一款 macOS 平台的翻译和 OCR 软件,您可以在任何应用程序中使用 Bob 进行翻译和 OCR,即用即走!</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/ZGGSONG/STranslate/raw/main/img/favicon.svg" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://stranslate.zggsong.com/">STranslate</a></td>

|

||||

<td> <a href="https://stranslate.zggsong.com/">STranslate</a>(Windows) 是 WPF 开发的一款即用即走的翻译、OCR工具 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.gptaiflow.tech/logo.png" alt="gpt-ai-flow-logo" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.gptaiflow.tech/zh/docs/product/api-keys-setup#setup-deepseek-api-keys">GPT AI Flow</a></td>

|

||||

<td>

|

||||

工程师为效率狂人(他们自己)打造的终极生产力武器: <a href="https://www.gptaiflow.tech/zh/">GPT AI Flow</a>

|

||||

<ul>

|

||||

<li>`Shift+Alt+空格` 唤醒桌面智能中枢</li>

|

||||

<li>本地加密存储</li>

|

||||

<li>自定义指令引擎</li>

|

||||

<li>按需调用拒绝订阅捆绑</li>

|

||||

</ul>

|

||||

</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/user-attachments/assets/b09f17a8-936d-4dac-8b24-1682d52c9a3c" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/alecm20/story-flicks">Story-Flicks</a></td>

|

||||

<td>通过一句话即可快速生成高清故事短视频,支持 DeepSeek 等模型。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.petercat.ai/images/favicon.ico" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.petercat.ai">PeterCat</a> </td>

|

||||

<td> 我们提供对话式答疑 Agent 配置系统、自托管部署方案和便捷的一体化应用 SDK,让您能够为自己的 GitHub 仓库一键创建智能答疑机器人,并快速集成到各类官网或项目中, 为社区提供更高效的技术支持生态。</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### AI Agent 框架

|

||||

|

|

@ -122,6 +161,21 @@

|

|||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/anda/README_cn.md">Anda</a> </td>

|

||||

<td>一个专为 AI 智能体开发设计的 Rust 语言框架,致力于构建高度可组合、自主运行且具备永久记忆能力的 AI 智能体网络。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td><img src="https://yomo.run/yomo-logo.png" alt="Icon" width="64" height="auto" /></td>

|

||||

<td><a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/yomo/README.md">YoMo</a></td>

|

||||

<td>Stateful Serverless LLM Function Calling Framework with Strongly-typed Language Support</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://alice.fun/alice-logo.png" alt="图标" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/bob-robert-ai/bob/blob/main/alice/readme.md">Alice</a> </td>

|

||||

<td>一个基于 ICP 的自主 AI 代理,利用 DeepSeek 等大型语言模型进行链上决策。Alice 结合实时数据分析和独特的个性,管理代币、挖掘 BOB 并参与生态系统治理。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://avatars.githubusercontent.com/u/173022229" alt="图标" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/APRO-com">ATTPs</a> </td>

|

||||

<td>一个用于Agent之间可信通信的基础协议框架,基于DeepSeek的Agent,可以接入<a href="https://docs.apro.com/attps">ATTPs</a>的SDK,获得注册Agent,发送可验证数据,获取可验证数据等功能,从而与其他平台的Agent进行可信通信。</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### RAG 框架

|

||||

|

|

@ -132,6 +186,26 @@

|

|||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/ragflow/README_cn.md"> RAGFlow </a> </td>

|

||||

<td> 一款基于深度文档理解构建的开源 RAG(Retrieval-Augmented Generation)引擎。RAGFlow 可以为各种规模的企业及个人提供一套精简的 RAG 工作流程,结合大语言模型(LLM)针对用户各类不同的复杂格式数据提供可靠的问答以及有理有据的引用。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/pingcap/tidb.ai/main/frontend/app/public/nextra/icon-dark.svg" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/autoflow/README_cn.md"> Autoflow </a> </td>

|

||||

<td> <a href="https://github.com/pingcap/autoflow">AutoFlow</a> 是一个开源的基于 GraphRAG 的知识库工具,构建于 <a href="https://www.pingcap.com/ai?utm_source=tidb.ai&utm_medium=community">TiDB</a> Vector、LlamaIndex 和 DSPy 之上。提供类 Perplexity 的搜索页面,并可以嵌入简单的 JavaScript 代码片段,轻松将 Autoflow 的对话式搜索窗口集成到您的网站。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://assets.zilliz.com/Zilliz_Logo_Mark_White_20230223_041013_86057436cc.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/zilliztech/deep-searcher"> DeepSearcher </a> </td>

|

||||

<td> DeepSearcher 结合强大的 LLM(DeepSeek、OpenAI 等)和向量数据库(Milvus 等),根据私有数据进行搜索、评估和推理,提供高度准确的答案和全面的报告。</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### Solana 框架

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="./docs/solana-agent-kit/assets/sendai-logo.png" alt="Icon" width="128" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/ragflow/README.md"> Solana Agent Kit </a> </td>

|

||||

<td>一个用于连接 AI 智能体到 Solana 协议的开源工具包。现在,任何使用 Deepseek LLM 的智能体都可以自主执行 60+ 种 Solana 操作。</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### 即时通讯插件

|

||||

|

|

@ -143,9 +217,14 @@

|

|||

<td> 一个集成到个人微信群/飞书群的领域知识助手,专注解答问题不闲聊</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/RockChinQ/QChatGPT/blob/master/res/logo.png?raw=true" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/RockChinQ/QChatGPT">QChatGPT<br/>(QQ)</a> </td>

|

||||

<td> 😎高稳定性、🧩支持插件、🌏实时联网的 LLM QQ / QQ频道 / One Bot 机器人🤖</td>

|

||||

<td> <img src="https://github.com/RockChinQ/LangBot/blob/master/res/logo.png?raw=true" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/RockChinQ/LangBot">LangBot<br/>(QQ, 企微, 飞书)</a> </td>

|

||||

<td> 大模型原生即时通信机器人平台,适配 QQ / QQ频道 / 飞书 / OneBot / 企业微信(wecom) 等多种消息平台 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://nonebot.dev/logo.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/KomoriDev/nonebot-plugin-deepseek">NoneBot<br/>(QQ, 飞书, Discord, TG, etc.)</a> </td>

|

||||

<td> 基于 NoneBot 框架,支持智能对话与深度思考功能。适配 QQ / 飞书 / Discord, TG 等多种消息平台 </td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

|

|

@ -182,13 +261,33 @@

|

|||

<td> <a href="https://fluent.thinkstu.com/"> 流畅阅读 </a> </td>

|

||||

<td> 一款革新性的浏览器开源翻译插件,让所有人都能够拥有基于母语般的阅读体验 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.ncurator.com/_next/image?url=%2Ffavicon.ico&w=96&q=75" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.ncurator.com/"> 馆长 </a> </td>

|

||||

<td> 知识库AI问答助手 - 让AI帮助你整理与分析知识</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/oinzen/RSSFlow-doc/blob/main/docs/images/en/icon64.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://rssflow.oinchain.com"> RssFlow </a> </td>

|

||||

<td>一款智能的RSS阅读器浏览器扩展,具有AI驱动的RSS摘要和多维度订阅视图功能。支持配置DeepSeek模型以增强内容理解能力。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.typral.com/_next/image?url=%2Ffavicon.ico&w=96&q=75" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.typral.com/"> Typral </a> </td>

|

||||

<td>超快的AI写作助手 - 让AI帮你快速优化日报,文章,文本等等...</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://static.trancy.org/assets/trancy_logo.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.trancy.org/"> Trancy </a> </td>

|

||||

<td>沉浸双语对照翻译、视频双语字幕、划句/划词翻译插件</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### VS Code 插件

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/deepseek-ai/awesome-deepseek-integration/assets/59196087/e4d082de-6f64-44b9-beaa-0de55d70cfab" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <img src="https://github.com/continuedev/continue/blob/main/docs/static/img/logo.png?raw=true" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/continue/README_cn.md"> Continue </a> </td>

|

||||

<td> 开源 IDE 插件,使用 LLM 做你的编程助手 </td>

|

||||

</tr>

|

||||

|

|

@ -197,13 +296,18 @@

|

|||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/cline/README.md"> Cline </a> </td>

|

||||

<td> Cline 是一款能够使用您的 CLI 和编辑器的 AI 助手。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/Sitoi/ai-commit/refs/heads/main/images/logo.png?raw=true" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/Sitoi/ai-commit/blob/main/README.md"> AI Commit </a> </td>

|

||||

<td> 使用 AI 生成 git commit message 的 VS Code 插件。 </td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### neovim 插件

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/user-attachments/assets/d66dfc62-8e69-4b00-8549-d0158e48e2e0" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <img src="https://github.com/user-attachments/assets/c316f70a-0a3c-4a32-b148-4df15e609acc" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/avante.nvim/README_cn.md"> avante.nvim </a> </td>

|

||||

<td> 开源 IDE 插件,使用 LLM 做你的编程助手 </td>

|

||||

</tr>

|

||||

|

|

@ -212,6 +316,11 @@

|

|||

<td> <a href="docs/llm.nvim/README.md"> llm.nvim </a> </td>

|

||||

<td> 免费的大语言模型插件,让你在Neovim中与大模型交互,支持任意一款大模型,比如Deepseek,GPT,GLM,kimi或者本地运行的大模型(比如ollama) </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/user-attachments/assets/d66dfc62-8e69-4b00-8549-d0158e48e2e0" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/minuet-ai.nvim/README_cn.md"> minuet-ai.nvim </a> </td>

|

||||

<td> Minuet 提供实时代码补全功能,支持多个主流大语言模型,包括 Deepseek、OpenAI、Gemini、Claude、Ollama、Codestral 等。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/user-attachments/assets/d66dfc62-8e69-4b00-8549-d0158e48e2e0" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/codecompanion.nvim/README.md"> codecompanion.nvim </a> </td>

|

||||

|

|

@ -234,9 +343,34 @@

|

|||

</tr>

|

||||

</table>

|

||||

|

||||

### AI Code编辑器

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://global.discourse-cdn.com/flex020/uploads/cursor1/original/2X/a/a4f78589d63edd61a2843306f8e11bad9590f0ca.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.cursor.com/"> Cursor </a> </td>

|

||||

<td>基于VS Code进行扩展的AI Code编辑器</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://exafunction.github.io/public/images/windsurf/windsurf-app-icon.svg" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://codeium.com/windsurf"> WindSurf </a> </td>

|

||||

<td>另一个基于VS Code的AI Code编辑器,由Codeium出品</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### 其它

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td><p style="font-size: 84px">🐠</p></td>

|

||||

<td> <a href="https://github.com/lunary-ai/abso/blob/main/README.md"> Abso </a> </td>

|

||||

<td> TypeScript SDK 使用 OpenAI 格式与任何 LLM 提供商进行交互。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://i.imgur.com/IsQYInJ.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/djcopley/ShellOracle/"> ShellOracle </a> </td>

|

||||

<td> 一种用于智能 shell 命令生成的终端工具。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/deepseek-ai/awesome-deepseek-integration/assets/59196087/c1e47b01-1766-4f7e-bfe6-ab3cb3991c30" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/tree/main/docs/siri_deepseek_shortcut"> 深度求索(快捷指令) </a> </td>

|

||||

|

|

@ -252,4 +386,18 @@

|

|||

<td> <a href="docs/promptfoo/README.md"> promptfoo </a> </td>

|

||||

<td> 测试和评估LLM提示,包括DeepSeek模型。比较不同的LLM提供商,捕获回归,并评估响应。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> </td>

|

||||

<td> <a href="https://github.com/AndersonBY/deepseek-tokenizer"> deepseek-tokenizer </a> </td>

|

||||

<td> 一个高效的轻量级tokenization库,仅依赖`tokenizers`库,不依赖`transformers`等重量级依赖。 </td>

|

||||

</tr>

|

||||

<tr> <td> CR </td>

|

||||

<td> <a href="https://github.com/hustcer/deepseek-review"> deepseek-review </a> </td>

|

||||

<td> 🚀 使用 Deepseek 进行代码审核,支持 GitHub Action 和本地 🚀 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/suqicloud/wp-ai-chat/raw/main/ic_logo.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/suqicloud/wp-ai-chat"> WordPress ai助手 </a> </td>

|

||||

<td> 对接Deepseek api用于WordPress站点的ai对话助手、ai文章生成、ai文章总结插件。 </td>

|

||||

</tr>

|

||||

</table>

|

||||

|

|

|

|||

411

README_ja.md

Normal file

|

|

@ -0,0 +1,411 @@

|

|||

<div align="center">

|

||||

|

||||

<p align="center">

|

||||

<img width="1000px" alt="Awesome DeepSeek Integrations" src="docs/Awesome DeepSeek Integrations.png">

|

||||

</p>

|

||||

|

||||

# Awesome DeepSeek Integrations

|

||||

|

||||

DeepSeek API を人気のソフトウェアに統合します。API キーを取得するには、[DeepSeek Open Platform](https://platform.deepseek.com/)にアクセスしてください。

|

||||

|

||||

[English](https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/README.md)/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/README_cn.md)/日本語

|

||||

|

||||

</div>

|

||||

|

||||

</br>

|

||||

</br>

|

||||

|

||||

### アプリケーション

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://avatars.githubusercontent.com/u/171659527?s=400&u=39906ab3b6e2066f83046096a66a77fb3f8bb836&v=4" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/quantalogic/quantalogic">Quantalogic</a> </td>

|

||||

<td> QuantaLogicは、高度なAIエージェントを構築するためのReAct(推論と行動)フレームワークです。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/deepseek-ai/awesome-deepseek-integration/assets/13600976/224d547a-6fbc-47c8-859f-aa14813e2b0f" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/chatbox/README.md">Chatbox</a> </td>

|

||||

<td> Chatboxは、Windows、Mac、Linuxで利用可能な複数の最先端LLMモデルのデスクトップクライアントです。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/deepseek-ai/awesome-deepseek-integration/assets/59196087/bb65404c-f867-42d8-ae2b-281fe953ab54" alt="Icon" width="64" height="auto"/> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/chatgpt_next_web/README.md"> ChatGPT-Next-Web </a> </td>

|

||||

<td> ChatGPT Next Webは、GPT3、GPT4、Gemini ProをサポートするクロスプラットフォームのChatGPTウェブUIです。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="./docs/liubai/assets/liubai-logo.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/liubai/README.md">Liubai</a> </td>

|

||||

<td> Liubaiは、WeChat上でDeepSeekを使用してノート、タスク、カレンダー、ToDoリストを操作できるようにします! </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/deepseek-ai/awesome-deepseek-integration/assets/59196087/1ac9791b-87f7-41d9-9282-a70698344e1d" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/pal/README.md"> Pal - AI Chat Client<br/>(iOS, iPadOS) </a> </td>

|

||||

<td> Palは、iOS上でカスタマイズされたチャットプレイグラウンドです。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.librechat.ai/librechat.svg" alt="LibreChat" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.librechat.ai/docs/configuration/librechat_yaml/ai_endpoints/deepseek">LibreChat</a> </td>

|

||||

<td> LibreChatは、DeepSeekをシームレスに統合してAIインタラクションを強化するカスタマイズ可能なオープンソースアプリです。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/rss-translator/RSS-Translator/main/core/static/favicon.ico" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/rss_translator/README.md"> RSS Translator </a> </td>

|

||||

<td> RSSフィードをあなたの言語に翻訳します! </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/ysnows/enconvo_media/main/logo.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/enconvo/README.md"> Enconvo </a> </td>

|

||||

<td> Enconvoは、AI時代のランチャーであり、すべてのAI機能のエントリーポイントであり、思いやりのあるインテリジェントアシスタントです。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td><img src="https://github.com/kangfenmao/cherry-studio/blob/main/src/renderer/src/assets/images/logo.png?raw=true" alt="Icon" width="64" height="auto" style="border-radius: 10px" /></td>

|

||||

<td><a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/cherrystudio/README.md">Cherry Studio</a></td>

|

||||

<td>プロデューサーのための強力なデスクトップAIアシスタント</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://tomemo.top/images/logo.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/tomemo/README.md"> ToMemo (iOS, iPadOS) </a> </td>

|

||||

<td> フレーズブック+クリップボード履歴+キーボードiOSアプリで、キーボードでの迅速な出力にAIマクロモデリングを統合しています。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/buxuku/video-subtitle-master/refs/heads/main/resources/icon.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/buxuku/video-subtitle-master">Video Subtitle Master</a></td>

|

||||

<td> ビデオの字幕を一括生成し、字幕を他の言語に翻訳することができます。これはクライアントサイドのツールで、MacとWindowsの両方のプラットフォームをサポートし、Baidu、Volcengine、DeepLx、OpenAI、DeepSeek、Ollamaなどの複数の翻訳サービスと統合されています。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/UnknownEnergy/chatgpt-api/blob/master/dist/assets/chatworm-72x72.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/UnknownEnergy/chatgpt-api/blob/master/README.md">Chatworm</a> </td>

|

||||

<td> Chatwormは、複数の最先端LLMモデルのためのウェブアプリで、オープンソースであり、Androidでも利用可能です。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/tisfeng/ImageBed/main/uPic/icon_512x512@2x.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/tisfeng/Easydict">Easydict</a></td>

|

||||

<td> Easydictは、単語の検索やテキストの翻訳を簡単かつエレガントに行うことができる、簡潔で使いやすい翻訳辞書macOSアプリです。大規模言語モデルAPIを呼び出して翻訳を行うことができます。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.raycast.com/favicon-production.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/raycast/README.md">Raycast</a></td>

|

||||

<td> <a href="https://raycast.com/?via=ViGeng">Raycast</a>は、macOSの生産性ツールで、いくつかのキーストロークでツールを制御できます。DeepSeek AIを含むさまざまな拡張機能をサポートしています。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://avatars.githubusercontent.com/u/193405629?s=200&v=4" alt="PHP Client" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-php/deepseek-php-client/blob/master/README.md">PHP Client</a> </td>

|

||||

<td> Deepseek PHP Clientは、Deepseek APIとのシームレスな統合のための堅牢でコミュニティ主導のPHPクライアントライブラリです。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://avatars.githubusercontent.com/u/958072?s=200&v=4" alt="Laravel Integration" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-php/deepseek-laravel/blob/master/README.md">Laravel Integration</a> </td>

|

||||

<td> LaravelアプリケーションとのシームレスなDeepseek API統合のためのLaravelラッパー。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="./docs/zotero/assets/zotero-icon.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/zotero/README.md">Zotero</a></td>

|

||||

<td> <a href="https://www.zotero.org">Zotero</a>は、研究成果を収集、整理、注釈、引用、共有するのに役立つ無料で使いやすいツールです。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://b3log.org/images/brand/siyuan-128.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/SiYuan/README.md">SiYuan</a> </td>

|

||||

<td> SiYuanは、完全にオフラインで使用できるプライバシー優先の個人知識管理システムであり、エンドツーエンドの暗号化データ同期を提供します。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/ArvinLovegood/go-stock/raw/master/build/appicon.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/ArvinLovegood/go-stock/blob/master/README.md">go-stock</a> </td>

|

||||

<td>go-stockは、Wailsを使用してNativeUIで構築され、LLMによって強化された中国株データビューアです。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://avatars.githubusercontent.com/u/102771702?s=200&v=4" alt="Wordware" width="64" height="auto" /> </td>

|

||||

<td> <a href="docs/wordware/README.md">Wordware</a> </td>

|

||||

<td><a href="https://www.wordware.ai/">Wordware</a>は、誰でも自然言語だけでAIスタックを構築、反復、デプロイできるツールキットです。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://framerusercontent.com/images/xRJ6vNo9mUYeVNxt0KITXCXEuSk.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/langgenius/dify/">Dify</a> </td>

|

||||

<td> <a href="https://dify.ai/">Dify</a>は、アシスタント、ワークフロー、テキストジェネレーターなどのアプリケーションを作成するためのDeepSeekモデルをサポートするLLMアプリケーション開発プラットフォームです。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/enricoros/big-AGI/refs/heads/v2-dev/public/favicon.ico" alt="Big-AGI" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/enricoros/big-AGI/blob/v2-dev/README.md">Big-AGI</a> </td>

|

||||

<td><a href="https://big-agi.com/">Big-AGI</a>は、誰もが高度な人工知能にアクセスできるようにするための画期的なAIスイートです。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/LiberSonora/LiberSonora/blob/main/assets/avatar.jpeg?raw=true" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/LiberSonora/LiberSonora/blob/main/README_en.md">LiberSonora</a> </td>

|

||||

<td> LiberSonoraは、「自由の声」を意味し、AIによって強化された強力なオープンソースのオーディオブックツールキットであり、インテリジェントな字幕抽出、AIタイトル生成、多言語翻訳などの機能を備え、GPUアクセラレーションとバッチオフライン処理をサポートしています。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/ripperhe/Bob/master/docs/_media/icon_128.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://bobtranslate.com/">Bob</a></td>

|

||||

<td> <a href="https://bobtranslate.com/">Bob</a>は、任意のアプリで使用できるmacOSの翻訳およびOCRツールです。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://agenticflow.ai/favicon.ico" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://agenticflow.ai/">AgenticFlow</a> </td>

|

||||

<td> <a href="https://agenticflow.ai/">AgenticFlow</a>は、マーケターがAIエージェントのためのエージェンティックAIワークフローを構築するためのノーコードプラットフォームであり、数百の毎日のアプリをツールとして使用します。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://www.petercat.ai/images/favicon.ico" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://www.petercat.ai">PeterCat</a> </td>

|

||||

<td> 会話型Q&Aエージェントの構成システム、自ホスト型デプロイメントソリューション、および便利なオールインワンアプリケーションSDKを提供し、GitHubリポジトリのためのインテリジェントQ&Aボットをワンクリックで作成し、さまざまな公式ウェブサイトやプロジェクトに迅速に統合し、コミュニティのためのより効率的な技術サポートエコシステムを提供します。</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### AI エージェントフレームワーク

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://panda.fans/_assets/favicons/apple-touch-icon.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/anda/README.md">Anda</a> </td>

|

||||

<td>高度にコンポーザブルで自律的かつ永続的な記憶を持つAIエージェントネットワークを構築するために設計されたRustフレームワーク。</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://avatars.githubusercontent.com/u/173022229" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/APRO-com">ATTPs</a> </td>

|

||||

<td>エージェント間の信頼できる通信のための基本プロトコルフレームワークです。利用者は<a href="https://docs.apro.com/attps">ATTPs</a>のSDKを導入することで、エージェントの登録、検証可能なデータの送信、検証可能なデータの取得などの機能を利用することができます。</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### RAG フレームワーク

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/deepseek-ai/awesome-deepseek-integration/assets/33142505/77093e84-9f7c-4716-9168-bac962fa1372" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/ragflow/README.md"> RAGFlow </a> </td>

|

||||

<td> 深い文書理解に基づいたオープンソースのRAG(Retrieval-Augmented Generation)エンジン。RAGFlowは、あらゆる規模の企業や個人に対して、ユーザーのさまざまな複雑な形式のデータに対して信頼性のある質問応答と根拠のある引用を提供するための簡素化されたRAGワークフローを提供します。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://raw.githubusercontent.com/pingcap/tidb.ai/main/frontend/app/public/nextra/icon-dark.svg" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/autoflow/README.md"> Autoflow </a> </td>

|

||||

<td> <a href="https://github.com/pingcap/autoflow">AutoFlow</a> は、GraphRAGに基づくオープンソースのナレッジベースツールであり、<a href="https://www.pingcap.com/ai?utm_source=tidb.ai&utm_medium=community">TiDB</a> Vector、LlamaIndex、DSPy の上に構築されています。Perplexity のような検索インターフェースを提供し、シンプルな JavaScript スニペットを埋め込むことで、AutoFlow の対話型検索ウィンドウを簡単にウェブサイトに統合できます。 </td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td> <img src="https://assets.zilliz.com/Zilliz_Logo_Mark_White_20230223_041013_86057436cc.png" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/zilliztech/deep-searcher"> DeepSearcher </a> </td>

|

||||

<td> DeepSearcher は、強力な大規模言語モデル(DeepSeek、OpenAI など)とベクトルデータベース(Milvus など)を組み合わせて、私有データに基づく検索、評価、推論を行い、高精度な回答と包括的なレポートを提供します。</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### Solana フレームワーク

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="./docs/solana-agent-kit/assets/sendai-logo.png" alt="Icon" width="128" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/ragflow/README.md"> Solana Agent Kit </a> </td>

|

||||

<td>AIエージェントをSolanaプロトコルに接続するためのオープンソースツールキット。DeepSeek LLMを使用する任意のエージェントが、60以上のSolanaアクションを自律的に実行できます。</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

### IM アプリケーションプラグイン

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td> <img src="https://github.com/InternLM/HuixiangDou/releases/download/v0.1.0rc1/huixiangdou.jpg" alt="Icon" width="64" height="auto" /> </td>

|

||||

<td> <a href="https://github.com/deepseek-ai/awesome-deepseek-integration/blob/main/docs/huixiangdou/README_cn.md">HuixiangDou<br/>(wechat,lark)</a> </td>

|

||||