+

+  |

+ 4EVERChat |

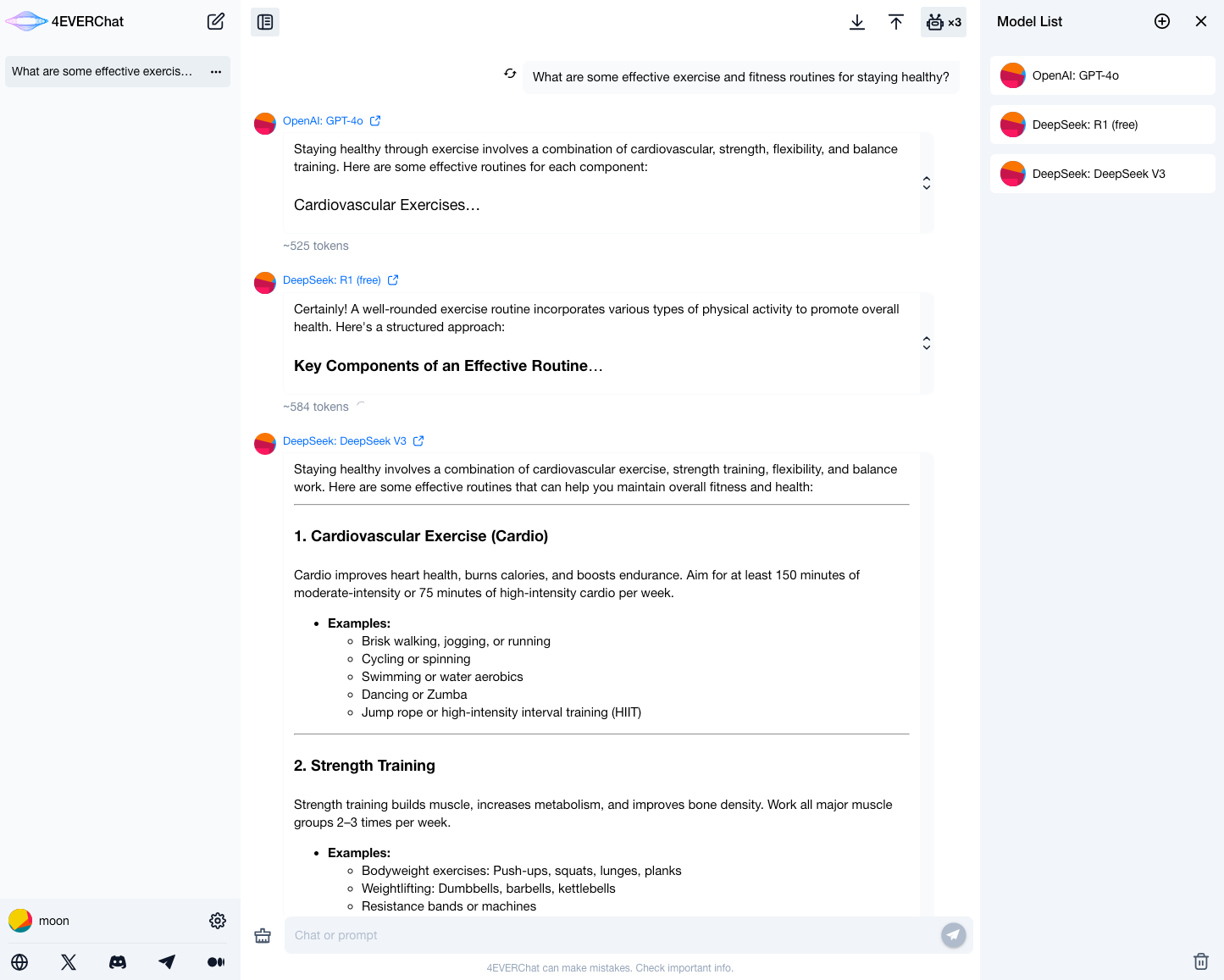

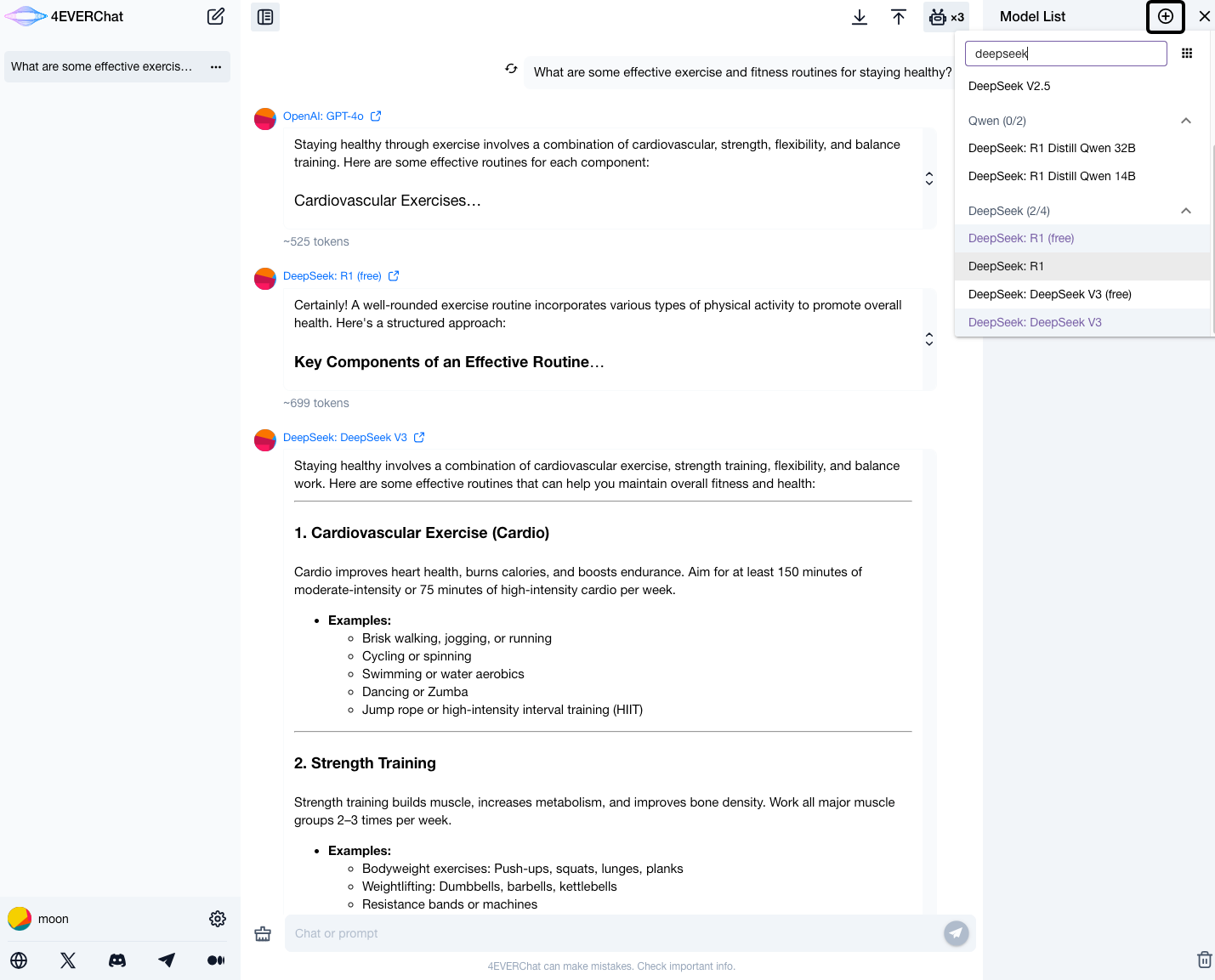

+ 4EVERChat is an intelligent model selection platform that integrates hundreds of LLMs and lets you compare model performance in real time. Using 4EVERLAND AI RPC's unified API endpoint, it switches models at no extra cost and automatically selects model combinations that respond quickly and cost less. |

+

+

+  |

+ xhai Browser |

+ xhai Browser is an Android desktop management and AI browser with DeepSeek as its default AI chat engine. It offers fast startup (0.2 seconds), a slim size (3 MB APK), no ads, ultra-fast ad blocking, multi-screen categorization, screen navigation, multiple search boxes, and multi-engine search from a single box. |

+

+

+  |

+ IntelliBar |

+ IntelliBar is a beautiful assistant for the Mac that lets you use advanced models like DeepSeek R1 with any app on your Mac; for example, edit emails in your mail app or summarize articles in your browser. |

+

+

+  |

+ DeepChat |

+ DeepChat is a completely free desktop smart assistant with a powerful built-in DeepSeek large model, supporting multi-turn conversations, web search, file uploads, knowledge bases, and more. |

+

+

+  |

+ Quantalogic |

+ QuantaLogic is a ReAct (Reasoning & Action) framework for building advanced AI agents. |

+

|

Chatbox |

@@ -28,6 +54,11 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

ChatGPT-Next-Web |

ChatGPT Next Web is a cross-platform ChatGPT web UI, with GPT3, GPT4 & Gemini Pro support. |

+

+  |

+ Coco AI |

+ Coco AI is a fully open-source, cross-platform unified search and productivity tool that connects and searches across various data sources, including applications, files, Google Drive, Notion, Yuque, Hugo, and more, both local and cloud-based. By integrating with large models like DeepSeek, Coco AI enables intelligent personal knowledge management, emphasizing privacy and supporting private deployment, helping users quickly and intelligently access their information. |

+

|

Liubai |

@@ -42,6 +73,16 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

LibreChat |

LibreChat is a customizable open-source app that seamlessly integrates DeepSeek for enhanced AI interactions. |

+

+

+  |

+ Just-Chat |

+ Build your LLM agent and chat with it, simply and quickly! |

+

+

+  |

+ PapersGPT |

+ PapersGPT is a Zotero plugin that integrates seamlessly with DeepSeek and other AI models for quickly reading papers in Zotero. |

|

@@ -83,12 +124,31 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

Raycast |

Raycast is a productivity tool for macOS that lets you control your tools with a few keystrokes. It supports various extensions including DeepSeek AI. |

+

+  | Nice Prompt | Nice Prompt lets you organize, share, and use your prompts in your code editor, with support for Cursor and VSCode. |

+

|

PHP Client |

Deepseek PHP Client is a robust and community-driven PHP client library for seamless integration with the Deepseek API. |

-

+

+

+  +

+ |

+

+ DeepSwiftSeek

+ |

+

+ DeepSwiftSeek is a lightweight yet powerful Swift client library that integrates cleanly with the DeepSeek API.

+ It provides an easy-to-use Swift concurrency interface for chat, streaming, FIM (Fill-in-the-Middle) completions, and more.

+ |

+

|

Laravel Integration |

Laravel wrapper for the Deepseek PHP client, for seamless Deepseek API integration with Laravel applications. |

@@ -96,11 +156,11 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

|

Zotero |

- Zotero is a free, easy-to-use tool to help you collect, organize, annotate, cite, and share research. |

+ Zotero is a free, easy-to-use tool to help you collect, organize, annotate, cite, and share research. It can use DeepSeek as a translation service. |

-  |

- SiYuan |

+  |

+ SiYuan |

SiYuan is a privacy-first personal knowledge management system that supports complete offline usage, as well as end-to-end encrypted data sync. |

@@ -139,30 +199,241 @@ English/[简体中文](https://github.com/deepseek-ai/awesome-deepseek-integrati

| AgenticFlow is a no-code platform where marketers build agentic AI workflows for go-to-market automation, powered by hundreds of everyday apps as tools for your AI agents. |

-  |

- ReadLecture |

- ReadLecture is an AI assistant designed to summarize audio and video content. It effortlessly transforms audio and video into accurate text and image formats. It also supports a variety of AI-driven learning methods, including PPT extraction, podcast summaries, mind mapping, translation, and meeting notes. |

+  |

+ AIhaoji |

+ AIhaoji is an AI assistant designed to summarize audio and video content. It effortlessly transforms audio and video into accurate text and image formats. It also supports a variety of AI-driven learning methods, including PPT extraction, podcast summaries, mind mapping, translation, and meeting notes. |

+

+  |

+ STranslate |

+ STranslate (Windows) is a ready-to-use translation and OCR tool built with WPF |

+

+

+  |

+ ASP Client |

+ Deepseek.ASPClient is a lightweight ASP.NET wrapper for the Deepseek AI API, designed to simplify AI-driven text processing in .NET applications. |

+

+

+  |

+ GPT AI Flow |

+

+ The ultimate productivity weapon built by engineers for efficiency enthusiasts (themselves): GPT AI Flow

+

+ - `Shift+Alt+Space` Wake up desktop intelligent hub

+ - Local encrypted storage

+ - Custom instruction engine

+ - On-demand calling without subscription bundling

+

+ |

+

+

+  |

+ Story-Flicks |

+ With just one sentence, you can quickly generate high-definition short story videos, with support for models such as DeepSeek. |

+

+

+  |

+ 16x Prompt |

+ 16x Prompt is an AI coding tool with context management. It helps developers manage source code context and craft prompts for complex coding tasks on existing codebases. |

+

+

+  |

+ Alpha Pai |

+ AI research assistant and next-generation, AI-driven financial information portal.

It attends meetings and takes notes on behalf of investors, and provides search, Q&A, and quantitative analysis of financial information for investment research. |

+

+  |

+ argo |

+ Download and run Ollama and Hugging Face models locally with RAG on Mac/Windows/Linux. LLM APIs are also supported. |

+

+

+  |

+ PeterCat |

+ A conversational Q&A agent configuration system, self-hosted deployment solutions, and a convenient all-in-one application SDK, allowing you to create intelligent Q&A bots for your GitHub repositories. |

+

+

+  |

+ FastGPT |

+

+ FastGPT is an open-source AI knowledge base platform built on large language models (LLMs), supporting various models including DeepSeek and OpenAI. We provide out-of-the-box capabilities for data processing, model invocation, RAG retrieval, and visual AI workflow orchestration, enabling you to effortlessly build sophisticated AI applications.

+ |

+

+

+  |

+ RuZhi AI Notes |

+ RuZhi AI Notes is an intelligent knowledge management tool powered by AI, providing one-stop knowledge management and application services including AI search & exploration, AI results to notes conversion, note management & organization, knowledge presentation & sharing. Integrated with DeepSeek model to provide more stable and higher quality outputs. |

+

+

+  |

+ Chatgpt-on-Wechat |

+ Chatgpt-on-Wechat(CoW) is a flexible chatbot framework that supports seamless integration of multiple LLMs, including DeepSeek, OpenAI, Claude, Qwen, and others, into commonly used platforms or office software such as WeChat Official Accounts, WeCom, Feishu, DingTalk, and websites. It also supports a wide range of custom plugins. |

+

+

+  |

+ Athena |

+ The world's first autonomous general AI with advanced cognitive architecture and human-like reasoning capabilities, designed to tackle complex real-world challenges. |

+

+

+  |

+ MaxKB |

+ MaxKB is a ready-to-use, flexible RAG Chatbot. |

+

+

+  |

+ TigerGPT |

+ TigerGPT is the first financial AI investment assistant of its kind based on OpenAI, developed by Tiger Group. TigerGPT aims to provide intelligent investment decision-making support for investors. On February 18, 2025, TigerGPT officially integrated the DeepSeek-R1 model to provide users with online Q&A services that support deep reasoning. |

+

+

+  |

+ HIX.AI |

+ Try DeepSeek for free and enjoy unlimited AI chat on HIX.AI. Use DeepSeek R1 for AI chat, writing, coding & more. Experience next-gen AI chat now! |

+

+

+  |

+ Askanywhere |

+ Select text anywhere and start a conversation with Deepseek |

+

+

+  |

+ 1chat |

+ An iOS app that lets you chat with the DeepSeek-R1 model locally. |

+

+

+  |

+ Access 250+ text, image LLMs in one app |

+ 1AI iOS Chatbot integrates with 250+ text, image, and voice models, allowing users to chat with any of them, including the DeepSeek R1 and DeepSeek V3 models. |

+

+

+  |

+ PopAi |

+ PopAi launches DeepSeek R1! Enjoy lag-free, lightning-fast performance with PopAi. Seamlessly toggle online search on/off. |

+

### AI Agent frameworks

+

+  |

+ smolagents |

+ The simplest way to build great agents. Agents write python code to call tools and orchestrate other agents. Priority support for open models like DeepSeek-R1! |

+

+

+  |

+ YoMo |

+ Stateful Serverless LLM Function Calling Framework with Strongly-typed Language Support |

+

+

+  |

+ SuperAgentX |

+ SuperAgentX: A Lightweight Open Source AI Framework Built for Autonomous Multi-Agent Applications with Artificial General Intelligence (AGI) Capabilities. |

+

|

Anda |

A Rust framework for AI agent development, designed to build a highly composable, autonomous, and perpetually memorizing network of AI agents. |

+

+  |

+ RIG |

+ Build modular and scalable LLM Applications in Rust. |

+

+

+  |

+ Just-Agents |

+ A lightweight, straightforward library for LLM agents - no over-engineering, just simplicity! |

+

+

+  |

+ Alice |

+ An autonomous AI agent on ICP, leveraging LLMs like DeepSeek for on-chain decision-making. Alice combines real-time data analysis with a playful personality to manage tokens, mine BOB, and govern ecosystems. |

+

+

+  |

+ Upsonic |

+ Upsonic offers a cutting-edge enterprise-ready agent framework where you can orchestrate LLM calls, agents, and computer use to complete tasks cost-effectively. |

+

+

+  |

+ ATTPs |

+ A foundational protocol framework for trusted communication between agents. By integrating the ATTPs SDK, any DeepSeek-based agent can access features such as agent registration, sending verifiable data, and retrieving verifiable data, so that it can communicate in a trusted way with agents from other platforms. |

+

+

+  |

+ translate.js |

+ AI i18n for front-end developers. It provides fully automatic HTML translation with just two lines of JavaScript, and users can switch among dozens of languages with a single click. It requires no page changes and no language configuration files, supports dozens of fine-tuning extension instructions, and is SEO-friendly. It also exposes a standard text translation API. |

+

+

+  |

+ agentUniverse |

+ agentUniverse is a multi-agent collaboration framework designed for complex business scenarios. It offers rapid and user-friendly development capabilities for LLM agent applications, with a focus on mechanisms such as agent collaborative scheduling, autonomous decision-making, and dynamic feedback. The framework originates from Ant Group's real-world business practices in the financial industry. In June 2024, agentUniverse achieved full integration support for the DeepSeek series of models. |

+

### RAG frameworks

+

+  |

+ 4EVERChat |

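+ 4EVERChat is an intelligent model selection platform that integrates hundreds of LLMs and supports direct comparison of different models' real-time responses. Built on 4EVERLAND AI RPC's unified API endpoint, it switches models at zero cost and automatically selects model combinations with fast responses and low cost. |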

+

+

+  |

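+ xhai Browser (小海浏览器) |

+ xhai Browser is an Android desktop management and AI browser with DeepSeek as its default AI chat engine. It offers extreme performance (0.2-second startup), a slim size (3 MB APK), no ads, ultra-fast ad blocking, multi-screen categorization, screen navigation, multiple search boxes, and multi-engine search from a single box. |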

+

+

+  |

+ DeepChat |

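+ DeepChat is a completely free desktop smart assistant with a powerful built-in DeepSeek large model, supporting multi-turn conversations, web search, file uploads, knowledge bases, and more. |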

+

+

+ | 🤖 |

+ Wechat-Bot |

+ A WeChat bot built on wechaty that combines DeepSeek with other AI services. |

+

+

+  |

+ Quantalogic |

+ QuantaLogic is a ReAct (Reasoning & Action) framework for building advanced AI agents. |

+

|

Chatbox |

@@ -29,7 +54,12 @@

Get a cross-platform ChatGPT web UI with one click, with support for popular LLMs |

-  |

+  |

+ Coco AI |

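+ Coco AI is a fully open-source, cross-platform unified search and productivity tool that connects to and searches across many data sources, both local and cloud-based, including applications, files, Google Drive, Notion, Yuque, and Hugo. By integrating large models such as DeepSeek, Coco AI enables intelligent personal knowledge base management with a focus on privacy and support for private deployment, helping users access information quickly and intelligently. |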

+

+

+  |

Liubai (留白记事) |

Liubai lets you use DeepSeek directly in WeChat to manage your notes, tasks, schedule, and to-do lists! |

@@ -43,59 +73,65 @@

LibreChat |

LibreChat is a customizable open-source application that seamlessly integrates DeepSeek to enhance the AI interaction experience |

+

+  |

+ PapersGPT |

+ PapersGPT is a Zotero plugin for assisted paper reading that integrates DeepSeek and several other AI models. |

+

|

- RSS Translator |

+ RSS Translator |

An open-source, simple, self-hostable RSS translator |

|

Enconvo |

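- Enconvo is the launcher for the AI era, the entry point for all AI features, and a thoughtful intelligent assistant. |

+ Enconvo is the launcher for the AI era, the entry point for all AI features, and a thoughtful intelligent assistant. |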

|

Cherry Studio |

- A desktop AI assistant built for creators |

+ A desktop AI assistant built for creators |

+

|

ToMemo (iOS, ipadOS) |

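- An iOS app combining a phrase collection, clipboard history, and keyboard output; it integrates large AI models for quick output from the keyboard. |

-

+ An iOS app combining a phrase collection, clipboard history, and keyboard output; it integrates large AI models for quick output from the keyboard. |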

+

|

Video Subtitle Master |

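- Batch-generates subtitles for videos and can translate them into other languages. It is a client-side tool that runs on both macOS and Windows and supports multiple translation services, including Baidu, Volcengine, DeepLX, OpenAI, DeepSeek, and Ollama |

-

+ Batch-generates subtitles for videos and can translate them into other languages. It is a client-side tool that runs on both macOS and Windows and supports multiple translation services, including Baidu, Volcengine, DeepLX, OpenAI, DeepSeek, and Ollama |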

+

|

Easydict |

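- Easydict is a clean, easy-to-use dictionary and translation app for macOS that lets you look up words or translate text easily and elegantly, with support for translation via large language model APIs. |

-

+ Easydict is a clean, easy-to-use dictionary and translation app for macOS that lets you look up words or translate text easily and elegantly, with support for translation via large language model APIs. |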

+

|

Raycast |

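- Raycast is a productivity tool for macOS that lets you control your tools with a few keystrokes. It supports various extensions, including DeepSeek AI. |

+ Raycast is a productivity tool for macOS that lets you control your tools with a few keystrokes. It supports various extensions, including DeepSeek AI. |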

-  |

+  |

Zotero |

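- Zotero is a free, easy-to-use reference management tool that helps you collect, organize, annotate, cite, and share research. |

+ Zotero is a free, easy-to-use reference management tool that helps you collect, organize, annotate, cite, and share research. |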

-  |

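- SiYuan (思源笔记) |

- SiYuan is a privacy-first personal knowledge management system that supports fully offline use and provides end-to-end encrypted data sync. |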

+  |

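+ SiYuan (思源笔记) |

+ SiYuan is a privacy-first personal knowledge management system that supports fully offline use and provides end-to-end encrypted data sync. |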

|

go-stock |

- go-stock is a stock data viewer and analyzer built with Wails using NativeUI and powered by LLMs. |

+ go-stock is a stock data viewer and analyzer built with Wails using NativeUI and powered by LLMs. |

|

Wordware |

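- Wordware is a toolkit that enables anyone to build, iterate on, and deploy their AI stack using natural language alone |

+ Wordware is a toolkit that enables anyone to build, iterate on, and deploy their AI stack using natural language alone |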

|

@@ -105,12 +141,102 @@

|

LiberSonora |

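- LiberSonora, meaning "the voice of freedom", is a powerful, AI-powered, open-source audiobook toolkit featuring intelligent subtitle extraction, AI title generation, multilingual translation, and more, with support for GPU acceleration and offline batch processing |

+ LiberSonora, meaning "the voice of freedom", is a powerful, AI-powered, open-source audiobook toolkit featuring intelligent subtitle extraction, AI title generation, multilingual translation, and more, with support for GPU acceleration and offline batch processing |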

|

Bob |

- Bob is a translation and OCR app for macOS that you can use in any application, ready whenever you need it! |

+ Bob is a translation and OCR app for macOS that you can use in any application, ready whenever you need it! |

+

+

+  |

+ STranslate |

+ STranslate (Windows) is a ready-to-use translation and OCR tool built with WPF |

+

+

+  |

+ GPT AI Flow |

+

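+ The ultimate productivity weapon built by engineers for efficiency enthusiasts (themselves): GPT AI Flow

+

+ - `Shift+Alt+Space` wakes the desktop intelligent hub

+ - Local encrypted storage

+ - Custom instruction engine

+ - On-demand calling without subscription bundling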

+

+ |

+

+

+  |

+ Story-Flicks |

+ With just one sentence, you can quickly generate high-definition short story videos, with support for models such as DeepSeek. |

+

+

+  |

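+ Alpha Pai (Alpha派) |

+ AI investment research assistant and next-generation, AI-driven financial information portal. It attends meetings and takes notes on behalf of investors, and supports investment research tasks such as search, Q&A, and quantitative analysis of financial information. |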

+

+

+  |

+ argo |

+ Download and run Hugging Face and Ollama models locally, with support for RAG, LLM APIs, tool integration, and more, on Mac/Windows/Linux. |

+

+

+  |

+ PeterCat |

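+ We provide a conversational Q&A agent configuration system, self-hosted deployment options, and a convenient all-in-one application SDK, so you can create an intelligent Q&A bot for your GitHub repository with one click and quickly integrate it into websites or projects, giving your community a more efficient technical support ecosystem. |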

+

+

+  |

+ FastGPT |

+

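+ FastGPT is an open-source AI knowledge base platform built on large language models (LLMs), supporting DeepSeek, OpenAI, and other models. We provide out-of-the-box data processing, model invocation, RAG retrieval, and visual AI workflow orchestration, helping you build complex AI applications with ease.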

+ |

+

+

+  |

+ Chatgpt-on-Wechat |

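+ Chatgpt-on-Wechat (CoW) is a flexible chatbot framework that connects multiple LLMs, including DeepSeek, OpenAI, Claude, and Qwen, to commonly used platforms and office software such as WeChat Official Accounts, WeCom, Feishu, DingTalk, and websites with one click. It also supports a wide range of custom plugins. |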

+

+

+  |

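+ RuZhi AI Notes (如知AI笔记) |

+ RuZhi AI Notes is an intelligent, AI-powered knowledge management tool that provides one-stop knowledge management and application services, including AI search and exploration, converting AI results to notes, note management and organization, and knowledge presentation and sharing. It integrates the DeepSeek deep-thinking model to provide more stable, higher-quality output. |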

+

+

+  |

+ Athena |

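+ The world's first autonomous general AI with an advanced cognitive architecture and human-like reasoning capabilities, designed to tackle complex real-world challenges. |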

+

+

+  |

+ TigerGPT |

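+ TigerGPT, developed by Tiger Group, is the industry's first financial AI investment assistant based on OpenAI. TigerGPT aims to provide intelligent investment decision-making support for investors. On February 18, 2025, TigerGPT officially integrated the DeepSeek-R1 model to provide users with online Q&A services that support deep reasoning. |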

+

+

+  |

+ HIX.AI |

+ Try DeepSeek for free and enjoy unlimited AI chat on HIX.AI. Use DeepSeek R1 for AI chat, writing, coding, and more. Experience next-generation AI chat now! |

+

+

+  |

+ Askanywhere (划词AI助手) |

+ A text-selection AI assistant: select text anywhere to quickly start a conversation with DeepSeek! |

+

+

+  |

+ 1chat (1查) |

+ An iOS app that lets you run DeepSeek locally |

+

+

+  |

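+ Access 250+ text and image large models in one app |

+ 1AI iOS Chatbot integrates 250+ text, image, and voice models, letting users chat with any model on OpenRouter or Replicate, including the DeepSeek reasoning and DeepSeek V3 models. |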

+

+

+  |

+ PopAi |

+ PopAi launches DeepSeek R1! Enjoy lag-free, lightning-fast performance with PopAi. Easily toggle online search on or off. |

|

@@ -119,16 +245,43 @@

+

### AI Agent frameworks

+

+  |

+ 4EVERChat |

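+ 4EVERChat is an intelligent model selection platform that integrates hundreds of LLMs and lets you compare model performance in real time. Using 4EVERLAND AI RPC's unified API endpoint, it switches models at no cost and automatically selects combinations with fast responses and low cost. |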

+

+

+  |

+ DeepChat |

+ DeepChat is a completely free desktop intelligent assistant with a powerful built-in DeepSeek model. It supports multi-turn conversations, online search, file uploads, knowledge bases, and more. |

+

+

+  |

+ Quantalogic |

+ QuantaLogic is a ReAct (Reasoning & Action) framework for building advanced AI agents. |

+

+

+  |

+ Chatbox |

+ Chatbox is a desktop client for multiple cutting-edge LLM models, available on Windows, Mac, and Linux. |

+

+

+  |

+ ChatGPT-Next-Web |

+ ChatGPT Next Web is a cross-platform ChatGPT web UI with support for GPT3, GPT4, and Gemini Pro. |

+

+

+  |

+ Coco AI |

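+ Coco AI is a fully open-source, cross-platform unified search and productivity tool that connects to and searches across various local and cloud data sources, including applications, files, Google Drive, Notion, Yuque, and Hugo. By integrating with large models such as DeepSeek, Coco AI enables intelligent personal knowledge management, emphasizes privacy, and supports private deployment, helping users access information quickly and intelligently. |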

+

+

+  |

+ Liubai |

+ Liubai lets you use DeepSeek in WeChat to manage your notes, tasks, calendar, and to-do lists! |

+

+

+  |

+ Pal - AI Chat Client

(iOS, ipadOS) |

+ Pal is a customized chat playground on iOS. |

+

+

+  |

+ LibreChat |

+ LibreChat is a customizable open-source app that seamlessly integrates DeepSeek for enhanced AI interactions. |

+

+

+  |

+ RSS Translator |

+ Translate your RSS feeds into your language! |

+

+

+  |

+ Enconvo |

+ Enconvo is the launcher for the AI era, the entry point for all AI features, and a thoughtful intelligent assistant. |

+

+

+  |

+ Cherry Studio |

+ A powerful desktop AI assistant for producers |

+

+

+  |

+ ToMemo (iOS, ipadOS) |

+ An iOS app combining a phrasebook, clipboard history, and keyboard; it integrates large AI models for quick output from the keyboard. |

+

+

+  |

+ Video Subtitle Master |

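+ Batch-generates subtitles for videos and can translate them into other languages. It is a client-side tool that supports both Mac and Windows and integrates with multiple translation services, including Baidu, Volcengine, DeepLx, OpenAI, DeepSeek, and Ollama. |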

+

+

+  |

+ Chatworm |

+ Chatworm is an open-source web app for multiple cutting-edge LLM models, and it is also available on Android. |

+

+

+  |

+ Easydict |

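+ Easydict is a clean, easy-to-use dictionary and translation app for macOS that lets you look up words or translate text easily and elegantly. It can call large language model APIs for translation. |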

+

+

+  |

+ Raycast |

+ Raycast is a productivity tool for macOS that lets you control your tools with a few keystrokes. It supports various extensions, including DeepSeek AI. |

+

+

+  |

+ PHP Client |

+ Deepseek PHP Client is a robust, community-driven PHP client library for seamless integration with the Deepseek API. |

+

+

+  |

+ Laravel Integration |

+ A Laravel wrapper for seamless Deepseek API integration with Laravel applications. |

+

+

+  |

+ Zotero |

+ Zotero is a free, easy-to-use tool that helps you collect, organize, annotate, cite, and share research. |

+

+

+  |

+ SiYuan |

+ SiYuan is a privacy-first personal knowledge management system that can be used fully offline and provides end-to-end encrypted data sync. |

+

+

+  |

+ go-stock |

+ go-stock is a Chinese stock data viewer built with Wails using NativeUI and powered by LLMs. |

+

+

+  |

+ Wordware |

+ Wordware is a toolkit that enables anyone to build, iterate on, and deploy their AI stack using natural language alone. |

+

+

+  |

+ Dify |

+ Dify is an LLM application development platform that supports DeepSeek models for creating assistants, workflows, text generators, and other applications. |

+

+

+  |

+ Big-AGI |

+ Big-AGI is a groundbreaking AI suite designed to make advanced artificial intelligence accessible to everyone. |

+

+

+  |

+ LiberSonora |

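+ LiberSonora, meaning "the voice of freedom", is a powerful, AI-powered, open-source audiobook toolkit featuring intelligent subtitle extraction, AI title generation, multilingual translation, and more, with support for GPU acceleration and offline batch processing. |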

+

+

+  |

+ Bob |

+ Bob is a macOS translation and OCR tool that you can use in any app. |

+

+

+  |

+ AgenticFlow |

+ AgenticFlow is a no-code platform where marketers build agentic AI workflows for their AI agents, using hundreds of everyday apps as tools. |

+

+

+  |

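+ Alpha Pai (Alphaパイ) |

+ AI investment research agent and next-generation financial information portal. It attends meetings on behalf of investors and takes AI-generated minutes, and supports investment research work such as search and Q&A over financial information and agent-driven quantitative analysis. |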

+

+

+  |

+ argo |

+ Download and run Ollama and Hugging Face models locally with RAG on Mac, Windows, and Linux. LLM APIs are also supported. |

+

+

+  |

+ PeterCat |

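+ Provides a conversational Q&A agent configuration system, self-hosted deployment solutions, and a convenient all-in-one application SDK, so you can create an intelligent Q&A bot for your GitHub repository with one click, quickly integrate it into websites or projects, and give your community a more efficient technical support ecosystem. |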

+

+

+  |

+ FastGPT |

+

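+ FastGPT is an open-source AI knowledge base platform built on large language models (LLMs), supporting various models including DeepSeek and OpenAI. It provides out-of-the-box data processing, model invocation, RAG retrieval, and visual AI workflow design, making it easy to build complex AI applications.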

+ |

+

+

+  |

+ Chatgpt-on-Wechat |

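+ ChatGPT-on-WeChat (CoW) is a flexible chatbot framework that seamlessly integrates multiple LLMs, including DeepSeek, OpenAI, Claude, and Qwen, into commonly used platforms and office software such as WeChat Official Accounts, WeCom, Feishu, DingTalk, and websites. It also supports a wide range of custom plugins. |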

+

+

+  |

+ TigerGPT |

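+ TigerGPT is the first financial AI investment assistant based on OpenAI, developed by Tiger Group. TigerGPT aims to provide investors with online Q&A services that support deep reasoning. On February 18, 2025, TigerGPT officially integrated the DeepSeek-R1 model to offer users online Q&A with deep reasoning support. |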

+

+

+  |

+ HIX.AI |

+ Try DeepSeek for free and enjoy unlimited AI chat on HIX.AI. Use DeepSeek R1 for AI chat, writing, coding, and more. Experience next-generation AI chat now! |

+

+

+  |

+ Askanywhere |

+ A text-selection AI assistant: select text anywhere to quickly start a conversation with Deepseek! |

+

+

+  |

+ 1chat |

+ An iOS app that lets you chat with the DeepSeek-R1 model locally. |

+

+

+  |

+ Access 250+ text and image large models in one app |

+ 1AI iOS Chatbot integrates 250+ text, image, and voice models, letting users talk to any model on OpenRouter or Replicate, including the Deepseek reasoning and Deepseek V3 models. |

+

+

+  |

+ PopAi |

+ PopAi launches DeepSeek R1! Enjoy lag-free, lightning-fast performance with PopAi. Seamlessly toggle online search on or off. |

+

+

+

+### AI Agent frameworks

+

+

+

+

+

+

+

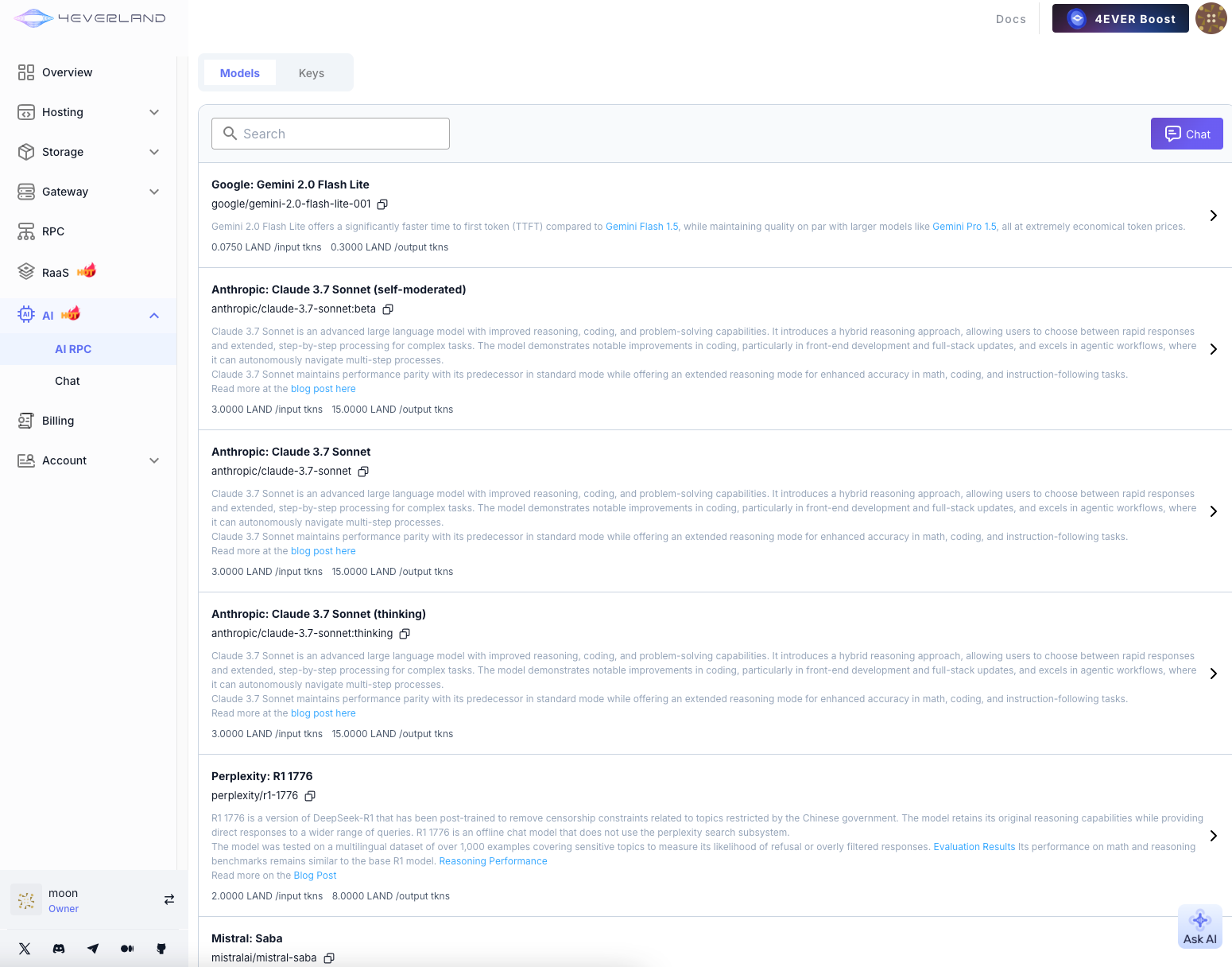

+#### 4EVER AI RPC

+

+

+


+

+

+## Add Deepseek Model

+

+

+

+

+


diff --git a/docs/4EVERChat/README_cn.md b/docs/4EVERChat/README_cn.md

new file mode 100644

index 0000000..c7c666a

--- /dev/null

+++ b/docs/4EVERChat/README_cn.md

@@ -0,0 +1,31 @@

+

+


+ +

+

+

+ +

+

+

+

+

diff --git a/docs/Alpha派/README_cn.md b/docs/Alpha派/README_cn.md

new file mode 100644

index 0000000..7be27ec

--- /dev/null

+++ b/docs/Alpha派/README_cn.md

@@ -0,0 +1,13 @@

+


+ +

+

+

+# Coco AI - Connect & Collaborate

+

+Coco AI is a unified search platform that connects all your enterprise applications and data (Google Workspace, Dropbox, Confluence Wiki, GitHub, and more) into a single, powerful search interface. This repository contains the **COCO App**, built for both **desktop and mobile**. The app allows users to search and interact with their enterprise data across platforms.

+

+In addition, COCO offers a **Gen-AI Chat for Teams**—imagine **Deepseek** but tailored to your team’s unique knowledge and internal resources. COCO enhances collaboration by making information instantly accessible and providing AI-driven insights based on your enterprise's specific data.

+

+# UI

+

+

+

+

+

diff --git a/docs/Coco AI/README_cn.md b/docs/Coco AI/README_cn.md

new file mode 100644

index 0000000..6a58a2b

--- /dev/null

+++ b/docs/Coco AI/README_cn.md

@@ -0,0 +1,16 @@

+

+

+

+


+ +

+ +

+ +

+ +

+ +

+

+

+

+👾 _**Alibaba International Digital Commerce**_ 👾

+

+:octocat: [**Github**](https://github.com/AIDC-AI/ComfyUI-Copilot)

+

+

+

+

+

+


+

+ +

+ +

+- 💎 **Node Query System**: Dive deeper into nodes by exploring their explanations, parameter definitions, usage tips, and downstream workflow recommendations.

+

+


+ +

+- 💎 **Smart Workflow Assistance**: Automatically discern developer needs to recommend and build fitting workflow frameworks, minimizing manual setup time.

+

+


+ +

+- 💎 **Model Querying**: Ask Copilot to find base models and LoRAs that match your requirements.

+- 💎 **Up-and-Coming Features**:

+

+ - **Automated Parameter Tuning**: Exploit machine learning algorithms for seamless analysis and optimization of critical workflow parameters.

+ - **Error Diagnosis and Fix Suggestions**: Receive comprehensive error insights and corrective advice to swiftly pinpoint and resolve issues.

+

+---

+

+## 🚀 Getting Started

+

+**Repository Overview**: Visit the [GitHub Repository](https://github.com/AIDC-AI/ComfyUI-Copilot) to access the complete codebase.

+

+1. **Installation**:

+

+ ```bash

+ cd ComfyUI/custom_nodes

+ git clone git@github.com:AIDC-AI/ComfyUI-Copilot.git

+ ```

+

+ or

+

+ ```bash

+ cd ComfyUI/custom_nodes

+ git clone https://github.com/AIDC-AI/ComfyUI-Copilot

+ ```

+2. **Activation**: After running the ComfyUI project, find the Copilot activation button at the top-right corner of the board to launch its service.

+

+


+3. **KeyGeneration**: Enter your name and email address on the link, and the API key will be sent to that email address automatically.
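As a quick sanity check after the installation step above, the sketch below tests whether the clone landed in the expected `custom_nodes` folder. It is only an illustration: the function names `copilot_installed` and `copilot_status` are hypothetical helpers, not part of ComfyUI or ComfyUI-Copilot, and the default root of `ComfyUI` is an assumption taken from the `cd ComfyUI/custom_nodes` command.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not shipped with ComfyUI-Copilot): succeeds when the
# plugin directory exists under <ComfyUI root>/custom_nodes.
copilot_installed() {
  local comfy_root="${1:-ComfyUI}"   # assumed default root: ./ComfyUI
  [ -d "${comfy_root}/custom_nodes/ComfyUI-Copilot" ]
}

# Hypothetical helper: print a one-word status for the given ComfyUI root.
copilot_status() {
  if copilot_installed "$1"; then
    echo "installed"
  else
    echo "missing"
  fi
}
```

For example, after sourcing the snippet, `copilot_status /path/to/ComfyUI` prints either `installed` or `missing`. It only checks that the directory exists; it does not verify that the plugin loads inside ComfyUI.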

+

+


+

+ +

+---

+

+## 🤝 Contributions

+

+We welcome any form of contribution! Feel free to open issues, submit pull requests, or suggest new features.

+

+---

+

+## 📞 Contact Us

+

+For any queries or suggestions, please feel free to contact: ComfyUI-Copilot@service.alibaba.com.

+

+---

+

+## 📚 License

+

+This project is licensed under the MIT License - see the [LICENSE](https://opensource.org/licenses/MIT) file for details.

diff --git a/docs/ComfyUI-Copilot/assets/Framework.png b/docs/ComfyUI-Copilot/assets/Framework.png

new file mode 100644

index 0000000..400f0ba

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/Framework.png differ

diff --git a/docs/ComfyUI-Copilot/assets/comfycopilot_nodes_recommend.gif b/docs/ComfyUI-Copilot/assets/comfycopilot_nodes_recommend.gif

new file mode 100644

index 0000000..0b46c48

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/comfycopilot_nodes_recommend.gif differ

diff --git a/docs/ComfyUI-Copilot/assets/comfycopilot_nodes_search.gif b/docs/ComfyUI-Copilot/assets/comfycopilot_nodes_search.gif

new file mode 100644

index 0000000..6793ca8

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/comfycopilot_nodes_search.gif differ

diff --git a/docs/ComfyUI-Copilot/assets/keygen.png b/docs/ComfyUI-Copilot/assets/keygen.png

new file mode 100644

index 0000000..6dc1211

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/keygen.png differ

diff --git a/docs/ComfyUI-Copilot/assets/logo 2.png b/docs/ComfyUI-Copilot/assets/logo 2.png

new file mode 100644

index 0000000..179510c

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/logo 2.png differ

diff --git a/docs/ComfyUI-Copilot/assets/logo.png b/docs/ComfyUI-Copilot/assets/logo.png

new file mode 100644

index 0000000..3237ccf

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/logo.png differ

diff --git a/docs/ComfyUI-Copilot/assets/start.png b/docs/ComfyUI-Copilot/assets/start.png

new file mode 100644

index 0000000..10500b3

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/start.png differ

diff --git a/docs/ComfyUI-Copilot/assets/工作流检索.png b/docs/ComfyUI-Copilot/assets/工作流检索.png

new file mode 100644

index 0000000..6c9c862

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/工作流检索.png differ

diff --git a/docs/Geneplore AI/README.md b/docs/Geneplore AI/README.md

new file mode 100644

index 0000000..27719e0

--- /dev/null

+++ b/docs/Geneplore AI/README.md

@@ -0,0 +1,17 @@

+# [Geneplore AI](https://geneplore.com/bot)

+

+## Geneplore AI is building the world's easiest way to use AI - Use 50+ models, all on Discord

+

+Chat with the all-new Deepseek v3, GPT-4o, Claude 3 Opus, LLaMA 3, Gemini Pro, FLUX.1, and ChatGPT with **one bot**. Generate videos with Stable Diffusion Video, and images with the newest and most popular models available.

+

+Don't like how the bot responds? Simply change the model in *seconds* and continue chatting like normal, without adding another bot to your server. No more fiddling with API keys and webhooks - every model is completely integrated into the bot.

+

+**NEW:** Try the most powerful open AI model, Deepseek v3, for free with our bot. Simply type /chat and select Deepseek in the model list.

+

+

+

+Use the bot trusted by over 60,000 servers and hundreds of paying subscribers, without the hassle of multiple $20/month subscriptions and complicated programming.

+

+https://geneplore.com

+

+© 2025 Geneplore AI, All Rights Reserved.

diff --git a/docs/HIX.AI/assets/logo.svg b/docs/HIX.AI/assets/logo.svg

new file mode 100644

index 0000000..ee496fc

--- /dev/null

+++ b/docs/HIX.AI/assets/logo.svg

@@ -0,0 +1 @@

+

diff --git a/docs/Ncurator/README.md b/docs/Ncurator/README.md

new file mode 100644

index 0000000..9661cdd

--- /dev/null

+++ b/docs/Ncurator/README.md

@@ -0,0 +1,12 @@

+

+

+---

+

+## 🤝 Contributions

+

+We welcome any form of contribution! Feel free to make issues, pull requests, or suggest new features.

+

+---

+

+## 📞 Contact Us

+

+For any queries or suggestions, please feel free to contact: ComfyUI-Copilot@service.alibaba.com.

+

+---

+

+## 📚 License

+

+This project is licensed under the MIT License - see the [LICENSE](https://opensource.org/licenses/MIT) file for details.

diff --git a/docs/ComfyUI-Copilot/assets/Framework.png b/docs/ComfyUI-Copilot/assets/Framework.png

new file mode 100644

index 0000000..400f0ba

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/Framework.png differ

diff --git a/docs/ComfyUI-Copilot/assets/comfycopilot_nodes_recommend.gif b/docs/ComfyUI-Copilot/assets/comfycopilot_nodes_recommend.gif

new file mode 100644

index 0000000..0b46c48

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/comfycopilot_nodes_recommend.gif differ

diff --git a/docs/ComfyUI-Copilot/assets/comfycopilot_nodes_search.gif b/docs/ComfyUI-Copilot/assets/comfycopilot_nodes_search.gif

new file mode 100644

index 0000000..6793ca8

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/comfycopilot_nodes_search.gif differ

diff --git a/docs/ComfyUI-Copilot/assets/keygen.png b/docs/ComfyUI-Copilot/assets/keygen.png

new file mode 100644

index 0000000..6dc1211

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/keygen.png differ

diff --git a/docs/ComfyUI-Copilot/assets/logo 2.png b/docs/ComfyUI-Copilot/assets/logo 2.png

new file mode 100644

index 0000000..179510c

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/logo 2.png differ

diff --git a/docs/ComfyUI-Copilot/assets/logo.png b/docs/ComfyUI-Copilot/assets/logo.png

new file mode 100644

index 0000000..3237ccf

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/logo.png differ

diff --git a/docs/ComfyUI-Copilot/assets/start.png b/docs/ComfyUI-Copilot/assets/start.png

new file mode 100644

index 0000000..10500b3

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/start.png differ

diff --git a/docs/ComfyUI-Copilot/assets/工作流检索.png b/docs/ComfyUI-Copilot/assets/工作流检索.png

new file mode 100644

index 0000000..6c9c862

Binary files /dev/null and b/docs/ComfyUI-Copilot/assets/工作流检索.png differ

diff --git a/docs/Ncurator/README.md b/docs/Ncurator/README.md

new file mode 100644

index 0000000..9661cdd

--- /dev/null

+++ b/docs/Ncurator/README.md

@@ -0,0 +1,12 @@

+ +

+# [Ncurator](https://www.ncurator.com)

+

+Knowledge Base AI Q&A Assistant -

+Let AI help you organize and analyze knowledge

+

+## UI

+

+ +

+## Integrate with Deepseek API

+

\ No newline at end of file

diff --git a/docs/Ncurator/README_cn.md b/docs/Ncurator/README_cn.md

new file mode 100644

index 0000000..8b9f87b

--- /dev/null

+++ b/docs/Ncurator/README_cn.md

@@ -0,0 +1,11 @@

+ +

+## Configure the Deepseek API

+

+ +

+___

+

+# [agentUniverse](https://github.com/antgroup/agentUniverse)

+

+agentUniverse is a multi-agent collaboration framework designed for complex business scenarios. It offers rapid and user-friendly development capabilities for LLM agent applications, with a focus on mechanisms such as agent collaborative scheduling, autonomous decision-making, and dynamic feedback. The framework originates from Ant Group's real-world business practices in the financial industry. In June 2024, agentUniverse achieved full integration support for the DeepSeek series of models.

+

+## Concepts

+

+

+

+ +

+## Integrate with Deepseek API

+

+### Configure via Python code

+```python

+import os

+os.environ['DEEPSEEK_API_KEY'] = 'sk-***'

+os.environ['DEEPSEEK_API_BASE'] = 'https://xxxxxx'

+```

+### Configure via the configuration file

+In the custom_key.toml file under the config directory of the project, add the configuration:

+```toml

+DEEPSEEK_API_KEY="sk-******"

+DEEPSEEK_API_BASE="https://xxxxxx"

+```

+

+For more details, please refer to the [Documentation: DeepSeek Integration](https://github.com/antgroup/agentUniverse/blob/master/docs/guidebook/en/In-Depth_Guides/Components/LLMs/DeepSeek_LLM_Use.md)

+
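The two settings above can be checked before an agent starts. The following is a minimal sketch, not part of agentUniverse: the helper name `deepseek_settings` and the keys of the returned dictionary are hypothetical and chosen only for illustration. It assumes, as the project's Chinese README states, that `DEEPSEEK_API_KEY` is required and `DEEPSEEK_API_BASE` is optional.

```python
import os


def deepseek_settings(env=None):
    """Collect DeepSeek settings from an environment-style mapping.

    Hypothetical helper for illustration; agentUniverse reads these
    variables itself and does not expose this function.
    """
    env = os.environ if env is None else env

    # DEEPSEEK_API_KEY is treated as mandatory.
    api_key = env.get("DEEPSEEK_API_KEY")
    if not api_key:
        raise RuntimeError("DEEPSEEK_API_KEY is required")

    settings = {"api_key": api_key}

    # DEEPSEEK_API_BASE is treated as optional and only included when set.
    api_base = env.get("DEEPSEEK_API_BASE")
    if api_base:
        settings["api_base"] = api_base
    return settings
```

Calling `deepseek_settings()` with no argument reads from `os.environ`, so it can be run right after the `os.environ[...]` assignments shown above to fail early when the key is missing.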

diff --git a/docs/agentUniverse/README_cn.md b/docs/agentUniverse/README_cn.md

new file mode 100644

index 0000000..956188e

--- /dev/null

+++ b/docs/agentUniverse/README_cn.md

@@ -0,0 +1,32 @@

+___

+ +

+## Using the DeepSeek API in your project

+

+### Configure via Python code

+Required: DEEPSEEK_API_KEY

+Optional: DEEPSEEK_API_BASE

+```python

+import os

+os.environ['DEEPSEEK_API_KEY'] = 'sk-***'

+os.environ['DEEPSEEK_API_BASE'] = 'https://xxxxxx'

+```

+### Configure via the configuration file

+In the custom_key.toml file under the project's config directory, add the configuration:

+```toml

+DEEPSEEK_API_KEY="sk-******"

+DEEPSEEK_API_BASE="https://xxxxxx"

+```

+

+For more details, see the [official documentation: DeepSeek integration](https://github.com/antgroup/agentUniverse/blob/master/docs/guidebook/zh/In-Depth_Guides/%E7%BB%84%E4%BB%B6%E5%88%97%E8%A1%A8/%E6%A8%A1%E5%9E%8B%E5%88%97%E8%A1%A8/DeepSeek%E4%BD%BF%E7%94%A8.md)

+

diff --git a/docs/agentUniverse/README_ja.md b/docs/agentUniverse/README_ja.md

new file mode 100644

index 0000000..336e4f6

--- /dev/null

+++ b/docs/agentUniverse/README_ja.md

@@ -0,0 +1,30 @@

+___

+ +

+ +

+ +

+ +

+### 2. Convenient Content Management

+- Save AI conversation content to notes with one click

+- Export to a variety of formats:

+  - Markdown

+  - Word documents

+  - PDF files

+  - PPT presentations

+  - Resume templates

+  - And more

+

+### 3. Upcoming Features

+- Task management list

+- To-do tracking

+- Calendar management

+- More intelligent features

+- Multi-platform clients

+

+## Usage Guide

+

+#### Method 1: Search

+1. Search for "RuZhi AI Notes" in a search engine

+2. Register

+

+#### Method 2: Direct Link Access

+1. Visit the official site: https://ruzhiai.perfcloud.cn/

+2. Complete the registration process

+ - The system will automatically create an application for you after registration

+ - You can then start using all features

diff --git a/docs/ruzhiai_note/README_cn.md b/docs/ruzhiai_note/README_cn.md

new file mode 100644

index 0000000..1690729

--- /dev/null

+++ b/docs/ruzhiai_note/README_cn.md

@@ -0,0 +1,49 @@

+ +

+---

+

+An open-source toolkit for connecting AI agents to Solana protocols. Now, any agent, using any model can autonomously perform 60+ Solana actions:

+

+

+## STEP 1

+

+Apply for an API key on the [DeepSeek open platform](https://platform.deepseek.com/)

+

+## STEP 2

+

+Initialize the DeepSeek LLM

+

+```typescript

+import { ChatDeepSeek } from "@langchain/deepseek";

+

+const deepseek = new ChatDeepSeek({

+ model: "deepseek-chat",

+ temperature: 0,

+});

+```

+

+## STEP 3

+

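+Initialize the Solana Agent Kit with DeepSeek

+

+```typescript

+import { SolanaAgentKit, createSolanaTools } from "solana-agent-kit";

+import { createReactAgent } from "@langchain/langgraph/prebuilt";

+import { MemorySaver } from "@langchain/langgraph";

+import { HumanMessage } from "@langchain/core/messages";

+

+// Assumed setup, not part of the original snippet: an in-memory checkpointer,

+// a thread id for the conversation, and a placeholder user prompt.

+const memory = new MemorySaver();

+const config = { configurable: { thread_id: "solana-agent-kit-demo" } };

+const userInput = "What is my wallet balance?";

+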

+const solanaAgent = new SolanaAgentKit(

+ process.env.SOLANA_PRIVATE_KEY!,

+ process.env.RPC_URL!,

+ {

+ OPENAI_API_KEY: process.env.OPENAI_API_KEY!,

+ HELIUS_API_KEY: process.env.HELIUS_API_KEY!,

+ PERPLEXITY_API_KEY: process.env.PERPLEXITY_API_KEY!,

+ },

+);

+

+const tools = createSolanaTools(solanaAgent);

+

+const agent = createReactAgent({

+ llm: deepseek,

+ tools,

+ checkpointSaver: memory,

+ messageModifier: `

+ You are a helpful agent that can interact onchain using the Solana Agent Kit. You are

+ empowered to interact onchain using your tools. If you ever need funds, you can request them from the

+ faucet. If not, you can provide your wallet details and request funds from the user. If there is a 5XX

+ (internal) HTTP error code, ask the user to try again later. If someone asks you to do something you

+ can't do with your currently available tools, you must say so, and encourage them to implement it

+ themselves using the Solana Agent Kit, recommend they go to https://www.solanaagentkit.xyz for more information. Be

+ concise and helpful with your responses. Refrain from restating your tools' descriptions unless it is explicitly requested.

+ `,

+});

+

+const stream = await agent.stream(

+ { messages: [new HumanMessage(userInput)] },

+ config,

+);

+```

+

+More guides can be found in the [Solana Agent Kit](https://docs.solanaagentkit.xyz/v0/introduction)

+

+

\ No newline at end of file

diff --git a/docs/solana-agent-kit/assets/sendai-logo.png b/docs/solana-agent-kit/assets/sendai-logo.png

new file mode 100644

index 0000000..638b962

Binary files /dev/null and b/docs/solana-agent-kit/assets/sendai-logo.png differ

diff --git a/docs/stranslate/README.md b/docs/stranslate/README.md

new file mode 100644

index 0000000..3d4c3fd

--- /dev/null

+++ b/docs/stranslate/README.md

@@ -0,0 +1,31 @@

+

+

+# [xhai Browser](https://www.dahai123.top/)

+

+

+[xhai Browser](https://m.malink.cn/s/7JFfIv) is an Android desktop management and AI browser with DeepSeek as its default AI dialog engine.

+It offers fast startup (0.2 seconds), a small footprint (3 MB APK), no ads, ultra-fast ad blocking, multi-screen classification, screen navigation, multiple search boxes, and multiple searches from a single box.

+

+xhai stands for "xiaohai" in Chinese, meaning "extreme, high-performance AI browser" in English.

+

+## UI

+

+

+

+ +

+

diff --git a/docs/xhai_browser/README_cn.md b/docs/xhai_browser/README_cn.md

new file mode 100644

index 0000000..0789078

--- /dev/null

+++ b/docs/xhai_browser/README_cn.md

@@ -0,0 +1,15 @@

+
